Projects

Shape2Vec: semantic-based descriptors for 3D shapes, sketches and images

Abstract

Convolutional neural networks have been successfully used to compute shape descriptors, or jointly embed shapes and sketches in a common vector space. We propose a novel approach that leverages both labeled 3D shapes and semantic information contained in the labels, to generate semantically-meaningful shape descriptors. A neural network is trained to generate shape descriptors that lie close to a vector representation of the shape class, given a vector space of words. This method is easily extendable to range scans, hand-drawn sketches and images. This makes cross-modal retrieval possible, without a need to design different methods depending on the query type. We show that sketch-based shape retrieval using semantic-based descriptors outperforms the state-of-the-art by large margins, and mesh-based retrieval generates results of higher relevance to the query, than current deep shape descriptors.

Video

Bibtex

@article{Tasse2016,

author = {Flora P. Tasse and Neil A. Dodgson},

title = {Shape2Vec: semantic-based descriptors for 3D shapes, sketches and images},

journal = {ACM Transactions On Graphics},

year = {2016},

url = {http://www.cl.cam.ac.uk/research/rainbow/projects/shape2vec},

}

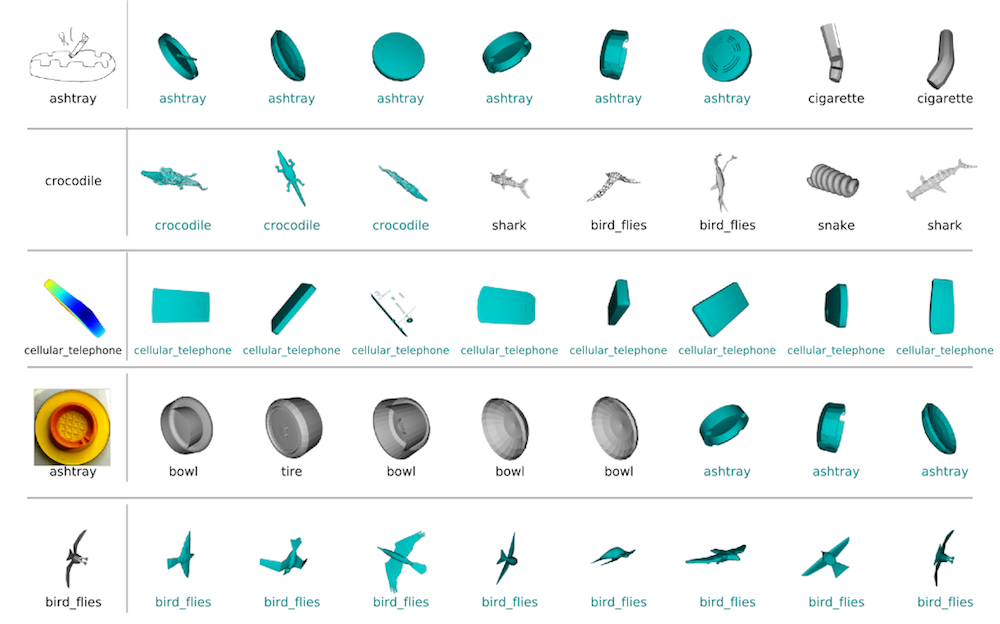

Cross-modal shape retrieval examples using different input modalities. From the top: a sketch, a word, a synthetic depthmap, a natural image [Russakovsky et al. 2015] and a 3D model query.

Cross-modal shape retrieval examples using different input modalities. From the top: a sketch, a word, a synthetic depthmap, a natural image [Russakovsky et al. 2015] and a 3D model query.