FovVideoVDP: A visible difference predictor for wide field-of-view video

Rafał K. Mantiuk(1), Gyorgy Denes(1,2), Alexandre Chapiro(2), Anton Kaplanyan(2), Gizem Rufo(2), Romain Bachy(2), Trisha Lian(2), and Anjul Patney(2).

(1)University of Cambridge, (2)Facebook Reality Labs

Presented at SIGGRAPH 2021, Technical Papers

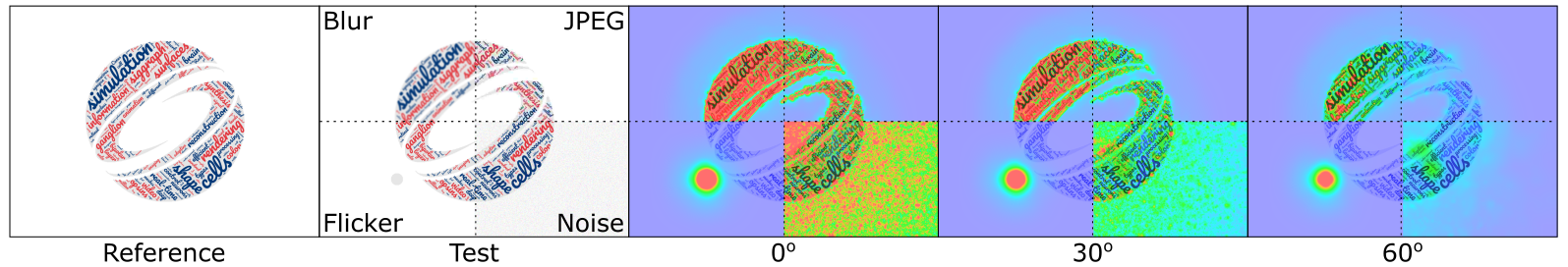

The heat-maps show our metric’s predictions for four types of distortion (blur, JPEG compression, 30 Hz flicker, and additive Gaussian noise) at three different eccentricities. The flicker is simulated as a dot that appears in every second frame. All types of artifacts are predicted to be much less noticeable when seen with peripheral vision at large eccentricities.

Abstract

FovVideoVDP is a video difference metric that models the spatial, temporal, and peripheral aspects of perception. While many other metrics are available, our work provides the first practical treatment of these three central aspects of vision simultaneously. The complex interplay between spatial and temporal sensitivity across retinal locations is especially important for displays that cover a large field-of-view, such as Virtual and Augmented Reality displays, and associated methods, such as foveated rendering. Our metric is derived from psychophysical studies of the early visual system, which model spatio-temporal contrast sensitivity, cortical magnification and contrast masking. It accounts for physical specification of the display (luminance, size, resolution) and viewing distance. To validate the metric, we collected a novel foveated rendering dataset which captures quality degradation due to sampling and reconstruction. To demonstrate our algorithm’s generality, we test it on 3 independent foveated video datasets, and on a large image quality dataset, achieving the best performance across all datasets when compared to the state-of-the-art.
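To illustrate why the display's size, resolution and viewing distance matter, the sketch below converts them into angular quantities of the kind a perceptual metric works with: pixels per visual degree and the eccentricity of a pixel. It is a minimal illustration using standard viewing geometry, not code from the FovVideoVDP implementation. The function names, the flat-display assumption, and the assumption that the gaze point lies where the line of sight is perpendicular to the screen are all ours.

```python
import math


def pixels_per_degree(resolution_px, display_width_m, viewing_distance_m):
    """Approximate angular resolution at the centre of a flat display.

    Hypothetical helper: assumes square pixels and a viewer looking
    perpendicular to the screen.
    """
    pixel_size_m = display_width_m / resolution_px
    # Visual angle subtended by a single pixel at the screen centre.
    deg_per_pixel = math.degrees(
        2.0 * math.atan(pixel_size_m / (2.0 * viewing_distance_m))
    )
    return 1.0 / deg_per_pixel


def eccentricity_deg(offset_px, resolution_px, display_width_m, viewing_distance_m):
    """Eccentricity (degrees) of a pixel `offset_px` away from the gaze point.

    Hypothetical helper: assumes the gaze point is where the line of
    sight meets the flat screen at a right angle.
    """
    pixel_size_m = display_width_m / resolution_px
    return math.degrees(math.atan(offset_px * pixel_size_m / viewing_distance_m))


if __name__ == "__main__":
    # Example values (assumed): a 3840-pixel-wide, 0.6 m wide display at 0.6 m.
    ppd = pixels_per_degree(3840, 0.6, 0.6)
    ecc = eccentricity_deg(1920, 3840, 0.6, 0.6)
    print(f"pixels per degree: {ppd:.1f}")
    print(f"eccentricity at the screen edge: {ecc:.1f} deg")
```

The same image viewed from further away yields more pixels per degree, so distortions shift to higher spatial frequencies where sensitivity is lower. This is one reason a metric that ignores viewing conditions cannot predict visibility reliably.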

Video

Paper overview (3 mins)

SIGGRAPH'21 talk (19 mins)

Materials

- Paper:

FovVideoVDP: A visible difference predictor for wide field-of-view video

Rafał K. Mantiuk, Gyorgy Denes, Alexandre Chapiro, Anton Kaplanyan, Gizem Rufo, Romain Bachy, Trisha Lian, and Anjul Patney.

In: ACM Transactions on Graphics (Proc. of SIGGRAPH 2021), 40(4), article no. 49, 2021

[paper PDF]

- Supplementary materials [PDF]

- Code [Github]

- Comparison of quality metrics [link]

This is a detailed report comparing the performance of quality metrics, including additional metrics that could not be included in the main paper.

- Results for synthetic distortions [link]

The synthetic test cases reveal how metrics behave under standard test conditions, such as an increasing amount of contrast masking, or Gabors of different frequencies.

- Ablation studies:

Related projects

- ColorVideoVDP - Color Video Visual Difference Predictor

- DPVM - Deep Photometric Visual Metric

- HDR-VDP - A Visual Difference Predictor for High Dynamic Range Images

Acknowledgement

This project has received funding from the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation programme (grant agreement No 725253 - EyeCode).