
Perceptual Model for Adaptive Local Shading and Refresh Rate

Akshay Jindal(1), Krzysztof Wolski(2), Karol Myszkowski(2), and Rafał K. Mantiuk(1).

(1)University of Cambridge, (2)Max-Planck-Institut für Informatik

To be presented at SIGGRAPH Asia 2021, Technical Papers

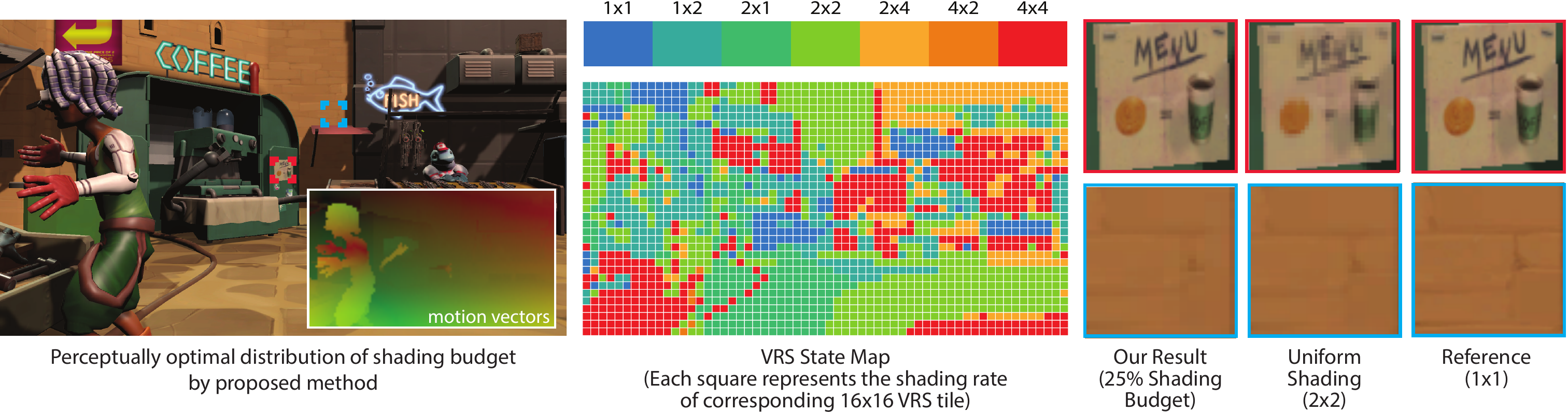

We propose a novel method for adaptive shading under a budget constraint that analyzes the display setup and content motion to optimally distribute the shading budget in real time. For a scene with a moving first-person camera rendered with a fixed shading budget of 25% of pixels per frame, our model assigns higher shading rates to regions where quality degradation due to variable-rate shading (VRS) is more visible and lower shading rates to regions where it is less perceptible.

Abstract

When the rendering budget is limited by power or time, it is necessary to find the combination of rendering parameters, such as resolution and refresh rate, that could deliver the best quality. Variable-rate shading (VRS), introduced in recent generations of GPUs, enables fine control of rendering quality: each 16x16 image tile can be rendered with a different ratio of shader executions. We take advantage of this capability and propose a new method for adaptive control of local shading and refresh rate. The method analyzes texture content, on-screen velocities, luminance, and effective resolution and suggests the refresh rate and a VRS state map that maximize the quality of animated content under a limited budget. The method is based on a new content-adaptive metric of judder, aliasing, and blur, which is derived from psychophysical models of contrast sensitivity. To calibrate and validate the metric, we gather data from the literature and also collect new measurements of motion quality under variable shading rates, different velocities of motion, texture content, and display capabilities, such as refresh rate, persistence, and angular resolution. The proposed metric and adaptive shading method are implemented as a game engine plugin. Our experimental validation shows a substantial increase in preference for our method over rendering with a fixed resolution and refresh rate, and over an existing motion-adaptive technique.
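The idea of distributing a fixed shading budget across image tiles can be illustrated with a toy sketch. This is not the authors' algorithm: the four-state rate set in `RATE_COST`, the per-tile `quality` table, and the greedy gain-per-cost heuristic are all assumptions made for illustration. In the paper, per-tile quality comes from the perceptual metric of judder, aliasing, and blur rather than from a hand-written table.

```python
import heapq

# Assumed fraction of pixels shaded in each VRS state, coarsest to finest
# (4x4, 2x2, 2x1, 1x1). Real hardware may expose a different set of states.
RATE_COST = [1 / 16, 1 / 4, 1 / 2, 1.0]


def allocate_shading_rates(quality, budget):
    """Greedily pick one rate index per tile under a shading budget.

    quality[t][r] is a hypothetical quality score for tile t at rate index r,
    assumed non-decreasing in r. budget is the mean fraction of pixels shaded
    per tile (0.25 means 25% of pixels per frame).
    """
    n = len(quality)
    rates = [0] * n                    # start every tile at the coarsest rate
    spent = n * RATE_COST[0]
    limit = budget * n

    def next_upgrade(t):
        r = rates[t]
        gain = quality[t][r + 1] - quality[t][r]
        cost = RATE_COST[r + 1] - RATE_COST[r]
        return (-gain / cost, t, cost)  # negated because heapq is a min-heap

    heap = [next_upgrade(t) for t in range(n)]
    heapq.heapify(heap)
    while heap:
        _, t, cost = heapq.heappop(heap)
        if spent + cost > limit + 1e-9:
            continue                   # upgrade does not fit; tile stays as is
        rates[t] += 1
        spent += cost
        if rates[t] + 1 < len(RATE_COST):
            heapq.heappush(heap, next_upgrade(t))
    return rates


# One tile where coarse shading is clearly visible, three where it is not.
quality = [
    [0.20, 0.60, 0.80, 1.00],
    [0.90, 0.95, 0.97, 1.00],
    [0.90, 0.95, 0.97, 1.00],
    [0.90, 0.95, 0.97, 1.00],
]
rates = allocate_shading_rates(quality, budget=0.25)
```

In this toy setup the tile whose quality drops most under coarse shading receives the finest rate the budget allows, while the remaining tiles stay at coarser rates. A greedy pass like this is only a heuristic and does not guarantee the optimal allocation.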

Video

Paper overview (4 mins)

SIGGRAPH Asia '21 talk (15 mins)

Materials

- Paper: Perceptual Model for Adaptive Local Shading and Refresh Rate. Akshay Jindal, Krzysztof Wolski, Rafał K. Mantiuk, and Karol Myszkowski. In: ACM Transactions on Graphics (Proc. of SIGGRAPH Asia 2021), 40(6), article no. 280, 2021. [paper PDF]

- Supplementary materials [PDF]

- Code [GitHub]

Related projects

- A perceptual model of motion quality for rendering with adaptive refresh-rate and resolution

- Temporal Resolution Multiplexing: Exploiting the limitations of spatio-temporal vision for more efficient VR rendering

Acknowledgement

This project has received funding from the European Union’s Horizon 2020 research and innovation programme under the Marie Skłodowska-Curie grant agreement No 765911 (RealVision) and under the European Research Council (ERC) Consolidator Grant agreement No 725253 (EyeCode).