Distilling Style from Image Pairs for Global Forward and Inverse Tone Mapping

The 19th ACM SIGGRAPH European Conference on Visual Media Production

Best Paper Award

Abstract

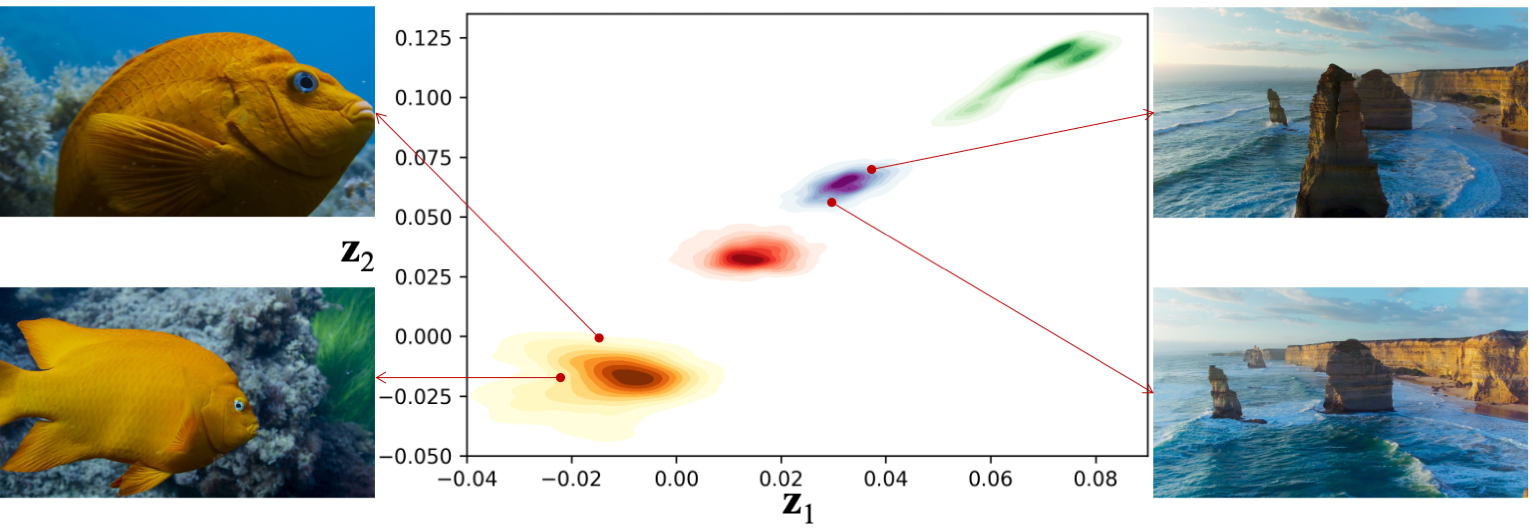

Many image enhancement or editing operations, such as forward and inverse tone mapping or color grading, do not have a unique solution but a range of solutions, each representing a different style. Despite this, existing learning-based methods attempt to learn a single mapping and disregard style. In this work, we show that information about the style can be distilled from collections of image pairs and encoded into a 2- or 3-dimensional vector. This gives us not only an efficient representation but also an interpretable latent space for editing the image style. We represent the global color mapping between a pair of images as a custom normalizing flow, conditioned on a polynomial basis of the pixel color. We show that such a network is more effective than PCA or a VAE at encoding image style in a low-dimensional space and lets us obtain an accuracy close to 40 dB, an improvement of about 7 to 10 dB over state-of-the-art methods.
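To make the terms "global color mapping", "polynomial basis of the pixel color", and accuracy in dB more concrete, here is a minimal sketch in Python with NumPy. It is not the method described above: the normalizing flow and the style vector are replaced by an ordinary least-squares fit, used purely as a stand-in. The function names (`polynomial_basis`, `fit_global_mapping`, `apply_global_mapping`, `psnr`), the choice of degree 2, and the reading of the dB figure as PSNR on images scaled to [0, 1] are all assumptions made for illustration.

```python
import numpy as np


def polynomial_basis(rgb, degree=2):
    """Expand N x 3 RGB pixels into all monomials r^i g^j b^k with i+j+k <= degree."""
    r, g, b = rgb[:, 0], rgb[:, 1], rgb[:, 2]
    terms = [
        (r ** i) * (g ** j) * (b ** k)
        for i in range(degree + 1)
        for j in range(degree + 1 - i)
        for k in range(degree + 1 - i - j)
    ]
    return np.stack(terms, axis=1)  # shape: N x number_of_monomials


def fit_global_mapping(source, target, degree=2):
    """Least-squares stand-in (NOT the paper's normalizing flow).

    Fits one set of coefficients that maps every source pixel to its
    target color, regardless of the pixel's position in the image.
    """
    basis = polynomial_basis(source.reshape(-1, 3), degree)
    coeffs, *_ = np.linalg.lstsq(basis, target.reshape(-1, 3), rcond=None)
    return coeffs  # shape: number_of_monomials x 3


def apply_global_mapping(source, coeffs, degree=2):
    """Apply the fitted per-pixel mapping to an H x W x 3 image."""
    basis = polynomial_basis(source.reshape(-1, 3), degree)
    return (basis @ coeffs).reshape(source.shape)


def psnr(pred, target, peak=1.0):
    """Peak signal-to-noise ratio in dB (assumed to be the reported metric)."""
    mse = np.mean((pred - target) ** 2)
    return float("inf") if mse == 0 else float(10.0 * np.log10(peak ** 2 / mse))
```

The mapping is "global" in the sense that the same function of color is applied to every pixel. Under the PSNR assumption, 40 dB on a [0, 1] scale corresponds to a mean squared error of 1e-4, that is, a root-mean-square error of about 1% of the signal range.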

Results

We provide a comprehensive qualitative comparison of results on four datasets: BBC Planet Earth II (Episode 3, Jungles), BBC Blue Planet II (Episode 5, Green Seas), The Lego Batman Movie, and the MIT-Adobe 5K dataset. You can toggle between the predicted and the target image by clicking on an image, and you can use the arrow buttons to sort the columns in increasing or decreasing order of each metric.

Contact

Please contact Aamir Mustafa or Rafał K. Mantiuk with any questions regarding the method.

Acknowledgements

This project has received funding from the European Research Council (ERC) under the European Union's Horizon 2020 research and innovation programme (grant agreement N° 725253, EyeCode).