
A perceptual model of motion quality for rendering with adaptive refresh-rate and resolution

Gyorgy Denes, Akshay Jindal, Aliaksei Mikhailiuk, Rafał K. Mantiuk

SIGGRAPH 2020, Technical Papers

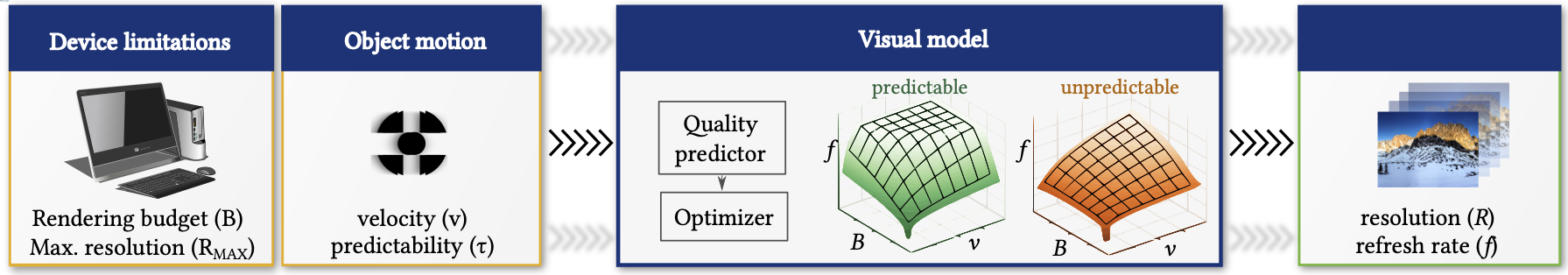

Our proposed perceptual model of motion quality takes object motion, refresh rate and device limitations (such as the rendering budget and the maximum screen resolution) as input and predicts the perceived quality. The model can then be used to find the combination of resolution and refresh rate that produces the highest animation quality under the given conditions. The surface plots visualize the model's predictions.
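The selection step described in the caption can be illustrated with a brute-force search over candidate settings. This is a minimal sketch under stated assumptions, not the authors' implementation. It assumes that rendering cost is proportional to the number of pixels shaded per second. `best_setting` and `toy_quality` are hypothetical names. `toy_quality` is a deliberately crude stand-in for the paper's fitted model: it only trades sample-and-hold blur against pixel size (about 0.02° per native pixel, an assumed value) and ignores judder.

```python
import math
from typing import Callable, Iterable, Optional, Tuple


# Hypothetical toy quality proxy, NOT the paper's fitted model: the negative of a
# blur width in visual degrees. It combines sample-and-hold blur (velocity / refresh
# rate, assuming perfect eye tracking) with pixel size, assuming ~0.02 deg per native
# pixel. Judder is ignored entirely.
def toy_quality(velocity_deg_s: float, refresh_hz: float, res_scale: float) -> float:
    pixel_deg = 0.02 / res_scale  # a lower resolution scale means larger pixels
    return -math.hypot(velocity_deg_s / refresh_hz, pixel_deg)


def best_setting(
    quality: Callable[[float, float, float], float],
    velocity_deg_s: float,
    refresh_rates: Iterable[float],
    res_scales: Iterable[float],
    native_pixels: int,
    budget_pixels_per_s: float,
) -> Optional[Tuple[float, float]]:
    """Return the (refresh_hz, res_scale) pair with the highest predicted quality
    whose pixel throughput fits within the budget, or None if nothing fits."""
    scales = list(res_scales)
    best, best_q = None, -math.inf
    for f in refresh_rates:
        for s in scales:
            # Assumed cost model: pixels shaded per second (scale applies per axis).
            cost = native_pixels * s * s * f
            if cost > budget_pixels_per_s:
                continue  # this combination exceeds the rendering budget
            q = quality(velocity_deg_s, f, s)
            if q > best_q:
                best, best_q = (f, s), q
    return best


native = 1920 * 1080
budget = native * 60  # just enough for native resolution at 60 Hz
rates, scales = [60, 120, 165], [1.0, 0.75, 0.5]
print(best_setting(toy_quality, 0.0, rates, scales, native, budget))   # (60, 1.0)
print(best_setting(toy_quality, 20.0, rates, scales, native, budget))  # (165, 0.5)
```

Under this toy proxy, static content favours full resolution at a low refresh rate. Fast motion favours the highest refresh rate the budget allows, even at half resolution. This mirrors the qualitative trade-off the model is designed to capture.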

Abstract

Limited GPU performance budgets and transmission bandwidths mean that real-time rendering often has to compromise on spatial resolution or temporal resolution (refresh rate). A common practice is to keep either the resolution or the refresh rate constant and dynamically control the other variable. However, this strategy is suboptimal when the velocity of the displayed content varies. To find the best trade-off between spatial resolution and refresh rate, we propose a perceptual visual model that predicts the quality of motion given object velocity and the predictability of motion. The model considers two motion artifacts to establish an overall quality score: non-smooth (juddery) motion and blur. Blur is modeled as a combined effect of eye motion, finite refresh rate and display resolution. To fit the free parameters of the proposed visual model, we measured eye movements for predictable and unpredictable motion and conducted psychophysical experiments to measure the quality of motion from 50 Hz to 165 Hz. We demonstrate the utility of the model with our on-the-fly motion-adaptive rendering algorithm, which adjusts the refresh rate of a G-Sync-capable monitor based on a given rendering budget and observed object motion. Our psychophysical validation experiments demonstrate that the proposed algorithm performs better than constant-refresh-rate solutions, showing that motion-adaptive rendering is an attractive technique for driving variable-refresh-rate displays.
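The abstract describes blur as a combined effect of eye motion, finite refresh rate and display resolution. As a rough, hypothetical illustration (not the paper's formulation), the sketch below makes three assumptions. First, it assumes a hold-type (sample-and-hold) display, on which a pursuing eye smears each held frame over `tracking_gain * velocity / refresh_hz` degrees. Second, it adds roughly one pixel of blur due to finite resolution. Third, it combines the two in quadrature, which is exact only for Gaussian blur profiles. The function name, parameters and the numbers used are illustrative assumptions, not measurements from the paper.

```python
import math


def retinal_blur_deg(velocity_deg_s: float, refresh_hz: float,
                     pixel_deg: float, tracking_gain: float = 1.0) -> float:
    """Toy retinal blur width (visual degrees) for an object on a hold-type display.

    Illustrative assumptions, not the paper's fitted model:
    - each frame stays on screen for 1 / refresh_hz seconds while the eye pursues
      the object at tracking_gain * velocity, smearing the held image on the retina;
    - finite display resolution adds roughly one pixel (pixel_deg) of blur;
    - the two blurs combine in quadrature, which is exact only for Gaussian profiles;
    - retinal slip caused by imperfect tracking (tracking_gain < 1) is ignored.
    """
    hold_blur = tracking_gain * velocity_deg_s / refresh_hz
    return math.hypot(hold_blur, pixel_deg)


# An object moving at 30 deg/s on a display with ~0.02 deg pixels (assumed values).
for hz in (60, 120, 165):
    print(hz, round(retinal_blur_deg(30.0, hz, 0.02), 3))
```

With these assumed values, the blur is about 0.50°, 0.25° and 0.18° at 60, 120 and 165 Hz. At this velocity, hold-type blur dominates pixel size, so raising the refresh rate helps more than raising the resolution. Because the sketch ignores retinal slip, it should not be read as predicting less blur for unpredictable motion.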

Video

SIGGRAPH fast-forward video

Full explainer video

Materials

- Paper [Paper, uncompressed (27 MB)] / [Paper, compressed (5 MB)] / [Supplementary material]

A perceptual model of motion quality for rendering with adaptive refresh-rate and resolution

Gyorgy Denes, Akshay Jindal, Aliaksei Mikhailiuk and Rafał K. Mantiuk.

In: ACM Transactions on Graphics (Proc. of SIGGRAPH 2020), in print, 2020

- Code [GitHub]

Related projects

- Perceptual Model for Adaptive Local Shading and Refresh Rate

- Temporal Resolution Multiplexing: Exploiting the limitations of spatio-temporal vision for more efficient VR rendering

Acknowledgement

This project has received funding from the European Research Council (ERC) under the European Union’s Horizon 2020 research and innovation programme (grant agreement No. 725253, EyeCode), under the Marie Skłodowska-Curie grant agreement No. 765911 (RealVision), and from the EPSRC research grant EP/N509620/1.