Projects

This is a selection of the major projects. A complete list of publications can be found here.

We have measured the maximum resolution (spatial frequency) that the human eye can see and how it translates to display resolution. This lets us determine the resolution beyond which no further improvement in image quality can be perceived.
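Here is a minimal sketch of how an angular resolution limit translates into display terms. It converts a display's horizontal pixel count, physical width and viewing distance into pixels per degree (ppd), and then into the highest spatial frequency the display can reproduce: its Nyquist limit, half the ppd, in cycles per degree. The function names and the monitor in the example are hypothetical. The sketch also assumes a flat screen viewed head-on at its centre.

```python
import math

def pixels_per_degree(h_pixels: int, width_m: float, distance_m: float) -> float:
    """Pixels per visual degree at the centre of a flat display.

    Assumes the viewer is centred in front of the screen and uses the
    visual angle subtended by a single pixel at the screen centre.
    """
    pitch = width_m / h_pixels  # pixel pitch in metres
    deg_per_pixel = math.degrees(2 * math.atan(pitch / (2 * distance_m)))
    return 1.0 / deg_per_pixel

def nyquist_cpd(ppd: float) -> float:
    """Highest spatial frequency (cycles per degree) the display can show."""
    return ppd / 2.0

# Hypothetical example: a 27-inch 16:9 4K monitor (about 0.598 m wide) viewed from 0.6 m.
ppd = pixels_per_degree(3840, 0.598, 0.6)
print(f"{ppd:.1f} ppd, Nyquist limit {nyquist_cpd(ppd):.1f} cpd")
```

Comparing the Nyquist limit with the highest spatial frequency the eye can resolve indicates whether additional pixels can still be perceived.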

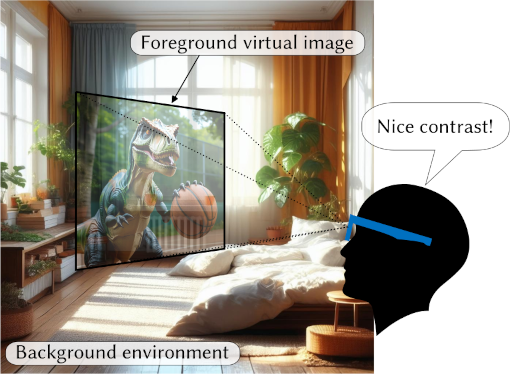

Supra-threshold Contrast Perception in Augmented Reality

In optical see-through AR displays, image contrast is much lower than on traditional displays because of the mixed-in background light, yet images appear sharper than expected. We explain this effect with a model that describes supra-threshold contrast perception across luminance levels, informing better AR display algorithms and hardware design.
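The following sketch shows why background light reduces contrast so strongly. It assumes purely additive blending, where the see-through light adds the same luminance to every displayed pixel. It then computes the Michelson contrast of a pattern with and without that added light. The luminance values are hypothetical.

```python
def michelson(l_max: float, l_min: float) -> float:
    """Michelson contrast of a pattern with peak and trough luminance in cd/m^2."""
    return (l_max - l_min) / (l_max + l_min)

def see_through_contrast(l_max: float, l_min: float, l_background: float) -> float:
    """Contrast after a uniform background luminance is added optically.

    Assumes purely additive blending: the background light reaching the eye
    through the combiner adds l_background to every displayed pixel.
    """
    return michelson(l_max + l_background, l_min + l_background)

# Hypothetical numbers: a 100/10 cd/m^2 grating over a 200 cd/m^2 background.
print(michelson(100, 10))                  # about 0.818 on an opaque display
print(see_through_contrast(100, 10, 200))  # about 0.176 in see-through AR
```

The physical contrast drops by more than a factor of four in this example, which is why the perceived sharpness calls for a supra-threshold model rather than a simple physical one.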

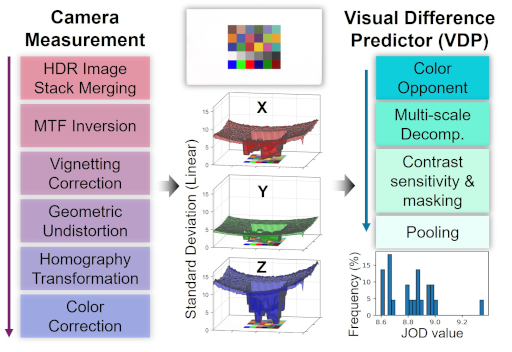

CameraVDP

CameraVDP combines calibrated camera capture with a Visual Difference Predictor to evaluate the visibility of display distortions, such as non-uniformity, color fringing, defective pixels, and others. Our camera calibration employs HDR merging, MTF inversion, vignetting correction, geometric undistortion, homography, and color correction to turn a camera into a measurement instrument.

AR-DAVID

AR-DAVID is a video quality dataset that captures how distortions due to display technologies (e.g., waveguide non-uniformity) are seen on an optical see-through AR display. We found that a simple blending of the environment and display light cannot predict the visibility of distortions.

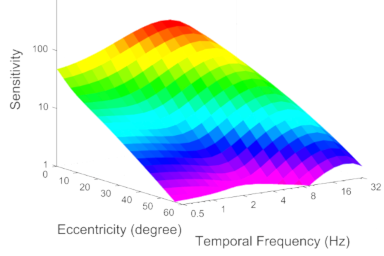

elaTCSF: A Temporal Contrast Sensitivity Function for Flicker Detection and Modeling Variable Refresh Rate Flicker

elaTCSF is a temporal contrast sensitivity model that predicts the visibility of flicker, such as the flicker found in variable-refresh-rate OLED and LCD displays.

ColorVideoVDP

ColorVideoVDP is a differentiable image and video quality metric that models human color and spatiotemporal vision. It is targeted and calibrated to assess image distortions due to AR/VR display technologies and video streaming, and it can handle both SDR and HDR content.

A model of spatio-temporal-chromatic contrast sensitivity that accounts for (c)hromaticity, (a)rea, (s)patial and (t)emporal frequency, (l)uminance and (e)ccentricity.

We reproduce gloss on our ultra-realistic HDR 3D display so that it appears identical to the gloss of real objects seen side by side. We observe that dynamic range, absolute luminance and the tone curve are the factors that influence gloss perception the most.

Spatiotemporal denoising for path tracing relies on a trained multi-scale decomposition (pyramid), which can better preserve details and avoid artifacts of previous methods.

Inaccurate exposure-time information results in banding artifacts when a stack of multiple exposures is merged into an HDR image. We show how the exposure times can be robustly estimated from an exposure stack while accounting for camera noise and pixel misalignment.
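For intuition, a simple baseline (not the noise-aware estimator described above) estimates the ratio of two exposure times directly from the images. It takes the median of per-pixel value ratios over pixels that are well exposed in both shots. The sketch assumes linear (RAW) pixel values in [0, 1] and uses hypothetical thresholds and data. The median gives some robustness to a few misaligned pixels, but unlike the project's method it does not model camera noise explicitly.

```python
from statistics import median

def estimate_exposure_ratio(short, long, low=0.05, high=0.9):
    """Estimate t_long / t_short from two linear (RAW) exposures.

    short, long: same-length sequences of linear pixel values in [0, 1].
    Only pixels that are neither too dark (noisy) nor close to saturation
    in both exposures are used; the median of the per-pixel ratios limits
    the influence of a few outliers such as misaligned pixels.
    """
    ratios = [
        l / s
        for s, l in zip(short, long)
        if low < s < high and low < l < high
    ]
    if not ratios:
        raise ValueError("no pixels are well exposed in both images")
    return median(ratios)

# Hypothetical data: true ratio 4, one misaligned pixel (ratio 7), one saturated pixel.
short = [0.06, 0.10, 0.12, 0.20, 0.10, 0.30]
long_ = [0.24, 0.40, 0.48, 0.80, 0.70, 1.00]
print(estimate_exposure_ratio(short, long_))  # about 4.0
```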

A contrast sensitivity function of the human visual system has been modeled as a function of spatial and temporal frequency, eccentricity, luminance, and area (stelaCSF). The same model explains the data from 11 contrast sensitivity datasets from the literature and enables applications in foveated rendering, flicker visibility, and other areas.

Low display brightness, which is often desirable in VR and stereo applications, decreases the precision of binocular depth cues. We measure and model this effect and then show how it can be compensated with a simple, real-time, image-space technique that produces preferred renderings with a stronger sense of depth on low-luminance VR headsets.

Existing protocols for evaluating single-image HDR reconstruction methods are unreliable because of large color and tone differences. We demonstrate that the accuracy of metrics can be improved by correcting for camera-response-curve inversion errors. Still, the metrics can detect only substantial differences, and conducting a controlled experiment is preferable.

Variable Rate Shading (VRS) and refresh rate are optimized using a perceptual motion-quality model to maximize rendering quality for a given rendering budget.

A novel high-dynamic-range multi-focal stereo display reproduces 3D objects with such high fidelity that they can be confused with real 3D objects.

FovVideoVDP is an image and video quality metric intended in particular for testing the performance of foveated rendering methods. The metric accounts for many aspects of low-level vision, such as luminance, contrast masking, spatio-temporal contrast sensitivity and reduced sensitivity outside the foveal region.

We can train image-to-image networks in a semi-supervised manner with 50% or less of paired data, or we can improve performance using large quantities of unpaired data. The method works with multiple tasks, including single-image super-resolution, denoising, semantic segmentation, colourization and others.

Is it better to render at 4K or 144Hz? The quality of motion depends on the velocity, the type of eye motion, viewing distance and other factors. We model the influence of all those factors on the perceived quality of motion and use such a model to adaptively select refresh-rate and resolution for rendering.

A luminance and colour contrast sensitivity function has been measured and modelled in the range of luminance from 0.001 cd/m^2 to 10,000 cd/m^2. The new function can predict detection thresholds for three colour directions, a range of frequencies, luminance levels and stimulus sizes.

The perceived contrast in VR/AR or stereoscopic displays is enhanced by showing a slightly modified image to each eye. The effect takes advantage of the binocular fusion mechanism, which shows bias toward the eye that can see a higher contrast.

A method for scaling together the results of rating and pairwise comparison experiments into a unified quality scale expressed in meaningful units of Just Objectionable Differences (JODs). The method can be used to combine existing datasets or to design experiments in which both protocols are combined.
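The JOD unit can be made concrete for the pairwise-comparison part alone. The sketch assumes Thurstone Case V (equal, uncorrelated Gaussian observer noise) and the convention that a difference of 1 JOD means 75% of observers prefer one condition over the other. Under those assumptions, it converts a preference probability into a JOD difference and back. It does not reproduce the joint scaling of rating and comparison data, and the function names are hypothetical.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()            # mean 0, standard deviation 1
_Z75 = _STD_NORMAL.inv_cdf(0.75)      # z-score of 75% preference, about 0.674

def prob_to_jod(p: float) -> float:
    """Quality difference in JODs from the probability that A is preferred over B.

    Assumes Thurstone Case V and that 1 JOD corresponds to 75% of
    observers choosing A.
    """
    if not 0.0 < p < 1.0:
        raise ValueError("p must be strictly between 0 and 1")
    return _STD_NORMAL.inv_cdf(p) / _Z75

def jod_to_prob(jod: float) -> float:
    """Inverse mapping: preference probability for a given JOD difference."""
    return _STD_NORMAL.cdf(jod * _Z75)

print(prob_to_jod(0.75))  # 1.0
print(prob_to_jod(0.5))   # 0.0
```

Because the mapping is nonlinear, preference probabilities close to 0 or 1 translate into large JOD differences. This is one reason why unanimous comparisons are hard to scale reliably.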

We make generative CNNs produce temporally coherent video by adding regularization terms to the loss function. The regularization encourages the network to respond to a geometric transformation of the input frame by applying the same transformation to the output frame.
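The idea behind such a regularization term can be sketched as a transformation-consistency penalty ||f(T(x)) - T(f(x))||². It is zero when transforming the input moves the output in the same way. In this sketch, a circular horizontal shift stands in for the geometric transformation T, and images are plain nested lists. Any image-to-image function plays the role of the network f. The helper names and the weight value are hypothetical, not the project's implementation.

```python
from typing import Callable, List

Image = List[List[float]]

def shift_right(img: Image, k: int = 1) -> Image:
    """Circularly shift every row by k pixels (a simple geometric transform T)."""
    return [row[-k:] + row[:-k] for row in img]

def mse(a: Image, b: Image) -> float:
    """Mean squared difference between two images of equal size."""
    n = sum(len(r) for r in a)
    return sum((x - y) ** 2 for ra, rb in zip(a, b) for x, y in zip(ra, rb)) / n

def consistency_penalty(net: Callable[[Image], Image], frame: Image, k: int = 1) -> float:
    """Mean of (net(T(x)) - T(net(x)))**2 for the shift T; zero for shift-equivariant nets."""
    return mse(net(shift_right(frame, k)), shift_right(net(frame), k))

def total_loss(task_loss: float, penalty: float, weight: float = 0.1) -> float:
    # weight is a hypothetical hyper-parameter balancing the task loss and the regularizer
    return task_loss + weight * penalty

frame = [[0.0, 1.0, 2.0], [3.0, 4.0, 5.0]]
gain = lambda img: [[2 * v for v in row] for row in img]                # shift-equivariant
ramp = lambda img: [[v + j for j, v in enumerate(row)] for row in img]  # position-dependent
print(consistency_penalty(gain, frame))      # 0.0
print(consistency_penalty(ramp, frame) > 0)  # True
```

During training, the penalty would be added to the usual task loss, for example with `total_loss`, so that the network is pushed towards outputs that change consistently with the input from frame to frame.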

Machine-learning-based metric for predicting visible differences between a pair of images. The metric can account for viewing distance and display brightness.

Every second frame of a high-frame-rate animation is rendered at a lower resolution, reducing the number of rendered and transmitted pixels by about 40%. The high-quality animation is reconstructed by exploiting the limitations of human spatio-temporal vision.
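The "about 40%" figure can be sanity-checked with simple arithmetic. The reduction factor is not stated here, so this sketch assumes the low-resolution frames are rendered at half the width and half the height. Under that assumption, every second frame has a quarter of the pixels, which saves 37.5% of all pixels. The function name is hypothetical.

```python
def pixel_saving(reduced_fraction: float, scale: float) -> float:
    """Fraction of pixels saved when some frames are rendered at reduced resolution.

    reduced_fraction: share of frames rendered at low resolution (0.5 = every second frame).
    scale: linear resolution factor of those frames (0.5 = half width and half height).
    """
    return reduced_fraction * (1.0 - scale ** 2)

print(pixel_saving(0.5, 0.5))  # 0.375
```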

Visibility of artifacts is marked by a number of observers to create a dataset of local differences. The dataset is then used to retrain existing visibility metrics, such as HDR-VDP-2, and to train a new CNN-based metric.

The largest image quality dataset, TID2013, is rescaled to improve the quality scores. Better quality estimates are obtained using a more rigorous observer model (Thurstone's Case V) and with additional cross-content and with-reference measurements.

Saturated pixels are reconstructed from a single low-dynamic range exposure with the help of a deep convolutional neural network.

We investigate whether 2D quality metrics can predict the distortions that can be found in light field applications.

The report contains a review of recent video tone mapping operators, outlining the most important trends and characteristics of the proposed methods.

Luma HDRv is a video codec based on VP9 that uses a perceptually motivated method to encode high dynamic range (HDR) video. Source code available.

Video tone mapping that controls the visibility of the noise, adapts to display and viewing environment, minimizes contrast distortions, preserves or enhances image details, and can be run in real-time without preprocessing.

Psychophysical evaluation of several encoding schemes for high dynamic range pixel values.
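One widely used perceptually motivated encoding for HDR pixel values is the SMPTE ST 2084 Perceptual Quantizer (PQ). It is included here only as a familiar example of the kind of scheme such an evaluation considers. It is not necessarily one of the schemes tested in this study, and it is not the encoding used in Luma HDRv. The sketch maps absolute luminance (up to 10,000 cd/m²) to a code value in [0, 1] and back, and it quantizes the code value to 10 bits. The function names are hypothetical.

```python
# Constants of the SMPTE ST 2084 (PQ) transfer function.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32

def pq_encode(luminance: float) -> float:
    """Map absolute luminance in cd/m^2 (0 to 10,000) to a PQ code value in [0, 1]."""
    y = min(max(luminance, 0.0), 10000.0) / 10000.0
    ym = y ** M1
    return ((C1 + C2 * ym) / (1 + C3 * ym)) ** M2

def pq_decode(value: float) -> float:
    """Inverse of pq_encode: PQ code value in [0, 1] to luminance in cd/m^2."""
    vp = min(max(value, 0.0), 1.0) ** (1 / M2)
    y = max(vp - C1, 0.0) / (C2 - C3 * vp)
    return 10000.0 * y ** (1 / M1)

def to_10bit(value: float) -> int:
    """Quantize a [0, 1] code value to a full-range 10-bit integer."""
    return round(value * 1023)

for lum in (0.1, 1.0, 100.0, 1000.0, 10000.0):
    print(lum, to_10bit(pq_encode(lum)))
```

With a perceptually uniform encoding like this, about half of the code values fall below 100 cd/m². This reflects the eye's higher sensitivity to relative changes at low luminance.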

We investigate the likely cause of the enhanced appearance of 3D-ness on HDR displays.

A method that reproduces the appearance of night scenes on bright displays or compensates for night vision on dark displays.

A new data-driven full-reference image quality metric intended for detecting rendering artefacts and their location in an image. The metric utilises a large number of features from quality metrics (SSIM, HDR-VDP-2), computer vision (HOG, BOW) and statistics.

Eleven tone mapping operators are evaluated and compared to investigate the major challenges and problems in video tone mapping.

A number of monocular depth cues are compared in a subjective experiment to evaluate the accuracy of intuitive depth ordering. Contrast and brightness on an HDR display were found to be among the most effective depth cues when no other depth cues are available.

To efficiently deploy eye-tracking within 3D graphics applications, we present a new probabilistic method that predicts the patterns of user’s eye fixations in animated 3D scenes from noisy eye-tracker data.

The new per-pixel image quality dataset with computer graphics artifacts shows the disappointing performance of both simple (PSNR, MSE, sCIE-Lab) and advanced (SSIM, MS-SSIM, HDR-VDP-2) quality metrics.

Unlike standard LCD or plasma displays, a high dynamic range (HDR) display is capable of showing images of very high contrast (up to 250,000:1) and peak brightness (up to 2,400 cd/m^2). As a result, the images and video shown on such a display look very realistic and appealing.

A metric for predicting visible differences (discrimination) and image quality (mean-opinion-score) in high dynamic range images. The metric is carefully calibrated and extensively tested against actual experimental data, ensuring the highest possible accuracy.

HDR images are captured in a single exposure with standard cameras by encoding information about the saturated pixels in a glare pattern. The glare is produced by a cross-screen (star) filter, making it amenable to tomographic reconstruction.

The digital viewfinder of a camera does not show the depth of field, lack of sharpness or motion blur that are normally visible in a full-size image. Based on blur-matching experimental data, the algorithm produces lower-resolution images while preserving apparent blur.

A method for displaying HDR images with exposure control in a web browser. The project page includes a toolkit for generating web pages with HDR images.

Color distortions due to tone-mapping are corrected by adjusting image color saturation. A model of color adjustment is built based on the data from an appearance-matching experiment.
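A common way to apply such a saturation adjustment is to re-colour the tone-mapped luminance with the formula C_out = (C_in / L_in)^s · L_out, applied to each linear RGB channel. Here s < 1 desaturates and s > 1 boosts saturation. The sketch below uses this widely used formulation as an assumption. The project fits a model that predicts the appropriate adjustment from experimental data, while here s is simply a hypothetical parameter. Luminance is computed with Rec. 709 weights, and the function names are hypothetical.

```python
from typing import Tuple

RGB = Tuple[float, float, float]

def luminance(rgb: RGB) -> float:
    """Relative luminance of linear Rec. 709 RGB."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def recolor(rgb_in: RGB, l_out: float, s: float) -> RGB:
    """Re-apply colour to a tone-mapped luminance l_out with saturation factor s.

    rgb_in: non-negative linear HDR colour. Implements
    C_out = (C_in / L_in) ** s * L_out for each channel.
    """
    l_in = luminance(rgb_in)
    if l_in <= 0:
        return (0.0, 0.0, 0.0)
    r, g, b = ((c / l_in) ** s * l_out for c in rgb_in)
    return (r, g, b)

# Hypothetical pixel: a saturated orange compressed from luminance ~3.6 to 0.8.
print(recolor((8.0, 2.0, 0.5), 0.8, 0.6))
```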

A tone-mapping algorithm that is controlled by a super-threshold visual metric. The solution takes into account display limitations, such as brightness, black level, and reflected ambient light.
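Display limitations of this kind are often described with a simple gamma-offset display model plus a reflection term: L = (L_peak − L_black) · V^γ + L_black + k · E_amb / π. In this model, V is the pixel value, E_amb is the ambient illuminance in lux, and k is the screen reflectance. The sketch below assumes this model, with hypothetical default parameters. It shows how reflected ambient light lifts the black level and reduces the contrast that a tone mapper can actually use.

```python
import math

def displayed_luminance(v: float, l_peak: float = 200.0, l_black: float = 0.5,
                        gamma: float = 2.2, e_amb: float = 0.0, k: float = 0.01) -> float:
    """Luminance (cd/m^2) reaching the eye for a pixel value v in [0, 1].

    Gamma-offset display model plus ambient light reflected from the screen:
    L = (L_peak - L_black) * v**gamma + L_black + k * E_amb / pi.
    All default parameter values are hypothetical.
    """
    l_refl = k * e_amb / math.pi
    return (l_peak - l_black) * v ** gamma + l_black + l_refl

def effective_contrast_ratio(**display) -> float:
    """White-to-black luminance ratio the viewer actually sees."""
    return displayed_luminance(1.0, **display) / displayed_luminance(0.0, **display)

print(effective_contrast_ratio())              # 400.0 in a dark room
print(effective_contrast_ratio(e_amb=1000.0))  # about 55 in a brightly lit office
```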

A visual metric that is mostly invariant to contrast and tone-scale manipulations. It can detect loss of visible contrast, amplification of invisible contrast and contrast reversal.

A semi-automatic method for classification of reflective and emissive objects in video, followed by brightness enhancement for display on high dynamic range displays.

A generic model of a local tone-mapping operator is fit to a pair of LDR and HDR images. The model is applied for backward-compatible HDR image compression, analysis of tone-mapping operators and synthesis of new operators from existing ones.

The perceived brightness of the glare illusion is measured in a brightness matching experiment. A simple Gaussian convolution is shown to produce a similar brightness boost as a physically correct PSF model of the eye optics.

For older projects visit the MPI HDR Projects web page or go to the publication list and click on the 'project page' link.