Hand-over-face gestures

Marwa Mahmoud, Tadas Baltrušaitis & Peter Robinson

People often hold their hands near their faces as a gesture in natural conversation. Because the hand occludes part of the face, this can interfere with affective inference from facial expressions. However, these gestures are also valuable as an additional channel for multi-modal inference. We have collected a 3D multi-modal corpus of naturally evoked complex mental states, and labelled it using crowd-sourcing. The database will be made available.

Cam3D corpus

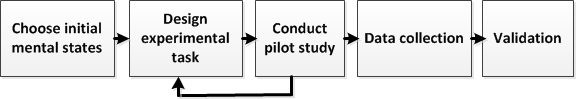

We elicited and recorded complex mental states involving both human-computer and human-human interaction.

Emotion elicitation

Data collection setup (human-computer and dyadic)

We used three different sensors for data collection: Microsoft Kinect sensors, HD cameras and microphones.

The corpus consists of 108 labelled videos of 12 mental states including spontaneous facial expressions and hand gestures. It was labelled using crowd-sourcing (inter-rater reliability Kappa = 0.45). The on-line annotation system is still running.
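For readers unfamiliar with agreement statistics, the following is a minimal sketch of how a kappa value can be computed for labels from several raters. The page does not say which kappa variant was used; Fleiss' kappa is assumed here only because it is a common choice when many raters label each item. The function name `fleiss_kappa` and the toy label counts are hypothetical and are not taken from the corpus.

```python
def fleiss_kappa(counts):
    """Fleiss' kappa for a table of rating counts.

    counts[i][j] is the number of raters who assigned item i to category j.
    Every item must have been rated by the same number of raters.
    """
    n_items = len(counts)
    n_raters = sum(counts[0])
    if n_raters < 2:
        raise ValueError("need at least two raters per item")
    if any(sum(row) != n_raters for row in counts):
        raise ValueError("every item needs the same number of ratings")
    n_categories = len(counts[0])

    # Share of all ratings that fell into each category.
    p_cat = [
        sum(row[j] for row in counts) / (n_items * n_raters)
        for j in range(n_categories)
    ]

    # Observed agreement per item: fraction of rater pairs that agree.
    p_item = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]

    p_observed = sum(p_item) / n_items
    p_expected = sum(p * p for p in p_cat)
    if p_expected == 1.0:
        raise ValueError("kappa is undefined when all ratings share one category")
    return (p_observed - p_expected) / (1.0 - p_expected)


# Hypothetical toy data: 4 videos, 3 raters each, 3 candidate labels.
toy_counts = [
    [3, 0, 0],
    [0, 3, 0],
    [2, 1, 0],
    [1, 1, 1],
]
print(round(fleiss_kappa(toy_counts), 3))
```

A value of 1 means perfect agreement and 0 means agreement no better than chance, so the reported 0.45 lies between the two.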

Colour image

Disparity map

Point cloud visualisation combining colour image and disparity map.
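As an illustration of how a colour image and a disparity map can be combined into a coloured point cloud, here is a minimal sketch using the standard pinhole and stereo relations (depth = focal length × baseline / disparity). It is not the authors' pipeline. It assumes the colour image is already registered pixel-for-pixel to the disparity map, that disparity is given in pixels, and that the intrinsics (`focal_px`, `baseline_m`, `cx`, `cy`) are known. All names and values here are hypothetical.

```python
def disparity_to_point_cloud(disparity, colour, focal_px, baseline_m, cx, cy):
    """Back-project a disparity map into a list of coloured 3D points.

    disparity: 2D list of disparities in pixels (<= 0 means "no measurement")
    colour:    2D list of (r, g, b) tuples, registered to the disparity map
    Returns a list of (x, y, z, (r, g, b)) tuples, with x, y, z in metres.
    """
    points = []
    for v, row in enumerate(disparity):
        for u, d in enumerate(row):
            if d <= 0:
                continue  # skip pixels without a valid disparity
            z = focal_px * baseline_m / d   # depth from disparity
            x = (u - cx) * z / focal_px     # horizontal offset from the optical axis
            y = (v - cy) * z / focal_px     # vertical offset from the optical axis
            points.append((x, y, z, colour[v][u]))
    return points


# Hypothetical 2x2 example; the intrinsics are illustrative, not sensor values.
toy_disparity = [[0, 10], [20, 5]]
toy_colour = [[(0, 0, 0), (255, 0, 0)], [(0, 255, 0), (0, 0, 255)]]
cloud = disparity_to_point_cloud(toy_disparity, toy_colour,
                                 focal_px=100.0, baseline_m=0.1, cx=0.5, cy=0.5)
for point in cloud:
    print(point)
```

Pixels with no valid disparity are dropped rather than given a placeholder depth, so the resulting cloud may have fewer points than the image has pixels.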

The corpus is generally available to interested researchers. To get a copy, please email Marwa Mahmoud or Tadas Baltrušaitis.

Hand-over-face gestures

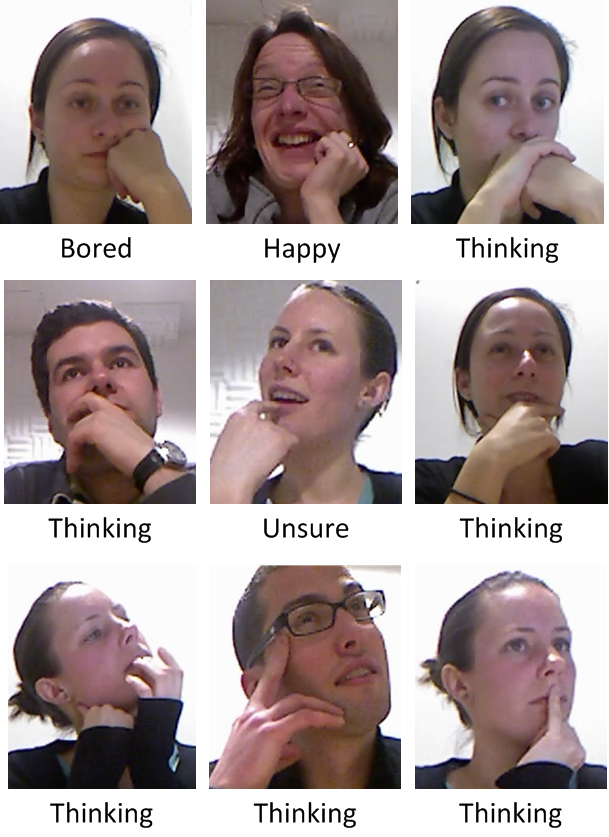

By studying the videos in our corpus, we noticed:

- Spontaneous hand-over-face gestures occur in 16% of HCI and 25% of dyadic interactions.

- Gestures serve as affective cues in cognitive mental states.


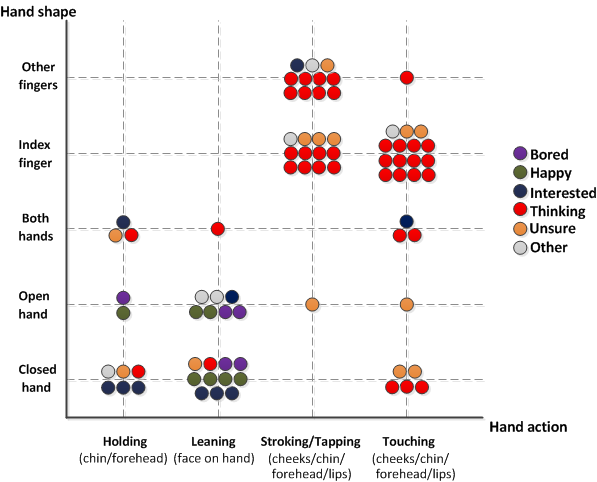

Different hand-over-face shapes, actions and occluded face regions can imply different mental states.

Encoding of hand-over-face shape and action, distributed across different mental states. Note the significance of index finger actions in cognitive mental states.

Currently, we are exploring the use of depth data in automatic analysis of facial expressions and hand gestures. We will also expand our corpus to allow further exploration of spontaneous gestures and hand-over-face cues.

Selected publications

- 3D corpus of spontaneous complex mental states

Marwa Mahmoud, Tadas Baltrušaitis, Peter Robinson and Laurel Riek

in International Conference on Affective Computing and Intelligent Interaction (ACII), Memphis, TN, October 2011 (in print)

- Interpreting hand-over-face gestures

Marwa Mahmoud and Peter Robinson

in the Doctoral Consortium of the International Conference on Affective Computing and Intelligent Interaction (ACII), Memphis, TN, October 2011 (in print)