Vocal affect inference

Tal Sobol Shikler, Tomas Pfister & Peter Robinson

Recording multi-modal cues for HCI

The automated understanding and synthesis of natural expressions in speech is still a major challenge for human-computer interaction and for industries such as computer-generated animation.

The aim of this research is to advance the technology and knowledge required to implement such systems. We have investigated how to infer emotion and social information from naturally evoked expressions in speech, especially expressions that occur in natural HCI environments, along with the nuances and temporal characteristics of those expressions. The results can then be integrated into HCI systems and used to enhance systems for realistic synthesis.

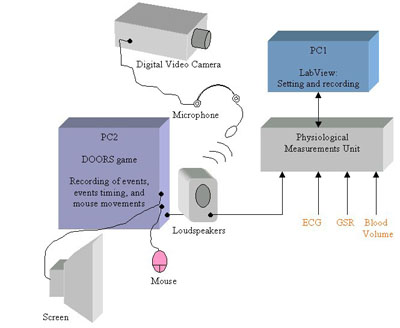

In the context of HCI systems, we are also exploring integration with multi-modal systems, including features that we take for granted in human communication, such as context and additional physiological cues.

Selected publications

- Visualizing dynamic features of expressions in speech

  Analysis of affect in speech

- Affect editing in speech

  Altering affect in speech

- Classification of complex information: Inference of co-occurring affective states from their expressions in speech

  Journal paper describing the overall inference system

- Analysis of affective expression in speech

  Tal Sobol Shikler’s PhD dissertation