Affective robotics for autism spectrum conditions

Charles, our android head from Hanson Robotics.


Children with autism spectrum conditions (ASC) have difficulty recognizing emotions from facial expressions due to altered face processing, yet successful emotion recognition from faces is an integral part of human social interaction.

Many intervention techniques have been developed to help children with ASC in this area. Among these, robot-based interventions seem to have great potential because they can portray a large repertoire of emotions, they allow the child to work at a flexible pace, and they are believed to be intrinsically motivating to children with ASC.

A new intervention

We are proposing a two-phase intervention with our android head Charles, pictured below.

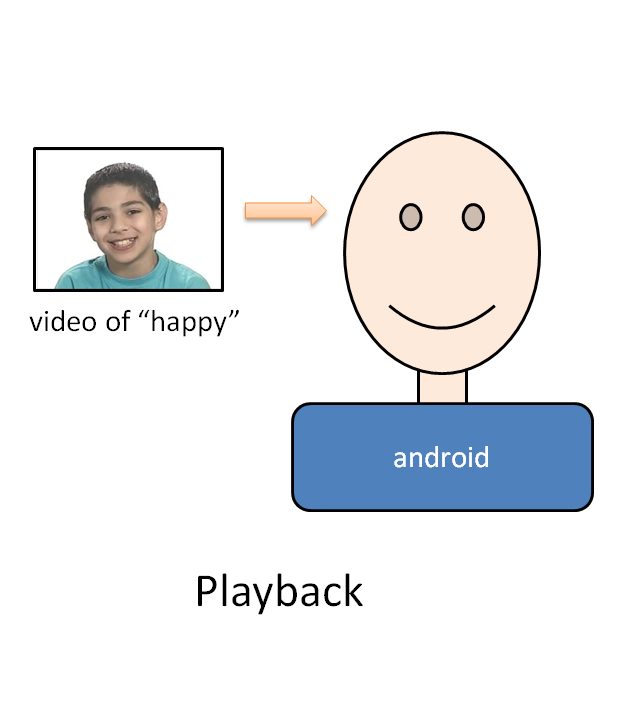

Phase 1: Playback and imitation of facial expressions

During playback, the robot “acts out” the facial expressions of a particular emotion while the child observes. The child can repeat the facial expressions for that emotion as many times as he/she desires.

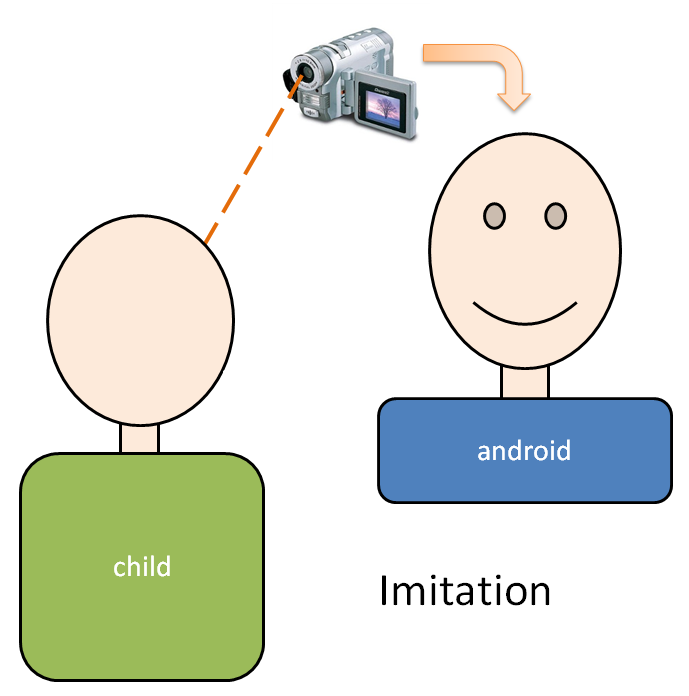

Next, during imitation, the child is asked to reproduce the observed facial expressions on the robot. To control the robot’s motors, the child uses his/her own facial expressions. As shown in the diagram below, there is a camera pointed at the child’s face and the video stream from this camera is processed in real-time to control the robot.


Playback: the robot “acts out” the facial expressions from a given video.


Imitation: the child recreates the facial expressions on the robot by controlling it using his/her own face.


Imitation plays an important role in cognitive development, for learning social skills and as a form of communication. The first phase of our proposed intervention uses imitation in two ways: firstly, by having the child imitate the facial expressions previously observed on the robot; and secondly, by having the robot controlled by (and therefore mimicking) the facial expressions of the child.

Phase 2: Facial expressions in a social context

Children with ASC often have difficulty generalizing the facial expressions that they learn to recognize during an intervention to real-world social scenarios after the intervention. However, situating the facial expressions within relevant social contexts during the intervention seems to improve generalization. Therefore, the second phase of our intervention uses the social context of a simple card game to elicit relevant facial expressions on the robot.

Creating realistic expressions on the robot

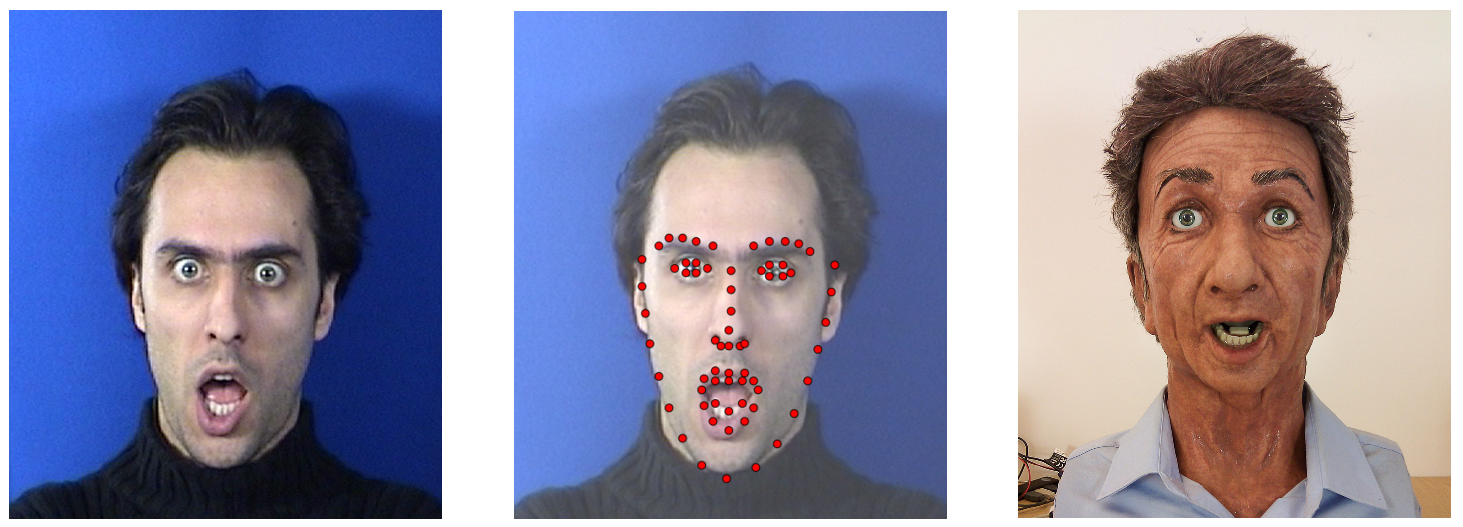

Manually setting motor positions on the robot to create facial expressions that represent particular mental states would be both inefficient and inaccurate. Instead, the robot is animated using facial expressions taken from videos.

The desired video is given as input to the FaceTracker software (developed at Carnegie Mellon University), which tracks 66 feature points on the face in the video. These points are then converted into motor positions on the robot.
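The conversion from feature points to motor positions is not specified here, so the following is only a minimal sketch of one plausible approach, not the method used with Charles. It assumes each motor is driven by the distance between two tracked points, normalised by a reference distance so that the result does not depend on how far the face is from the camera. The motor names, point indices, and calibration values are all hypothetical and chosen purely for illustration; only the count of 66 points comes from the description above.

```python
from dataclasses import dataclass
from math import hypot
from typing import Dict, Sequence, Tuple

Point = Tuple[float, float]

N_POINTS = 66  # feature points per frame, as stated in the text


@dataclass(frozen=True)
class MotorRule:
    """Hypothetical rule: one motor driven by the distance between two points."""
    point_a: int
    point_b: int
    neutral: float  # normalised distance for a neutral face (maps to 0.0)
    extreme: float  # normalised distance at full expression (maps to 1.0)


# Hypothetical indices and calibration values, for illustration only.
SCALE_PAIR = (36, 45)  # two points assumed to stay a fixed distance apart
RULES: Dict[str, MotorRule] = {
    "jaw": MotorRule(51, 57, neutral=0.30, extreme=0.80),
    "left_brow": MotorRule(19, 37, neutral=0.25, extreme=0.45),
}


def distance(points: Sequence[Point], i: int, j: int) -> float:
    """Euclidean distance between feature points i and j."""
    (x1, y1), (x2, y2) = points[i], points[j]
    return hypot(x2 - x1, y2 - y1)


def points_to_motors(
    points: Sequence[Point],
    rules: Dict[str, MotorRule] = RULES,
    scale_pair: Tuple[int, int] = SCALE_PAIR,
) -> Dict[str, float]:
    """Map one frame of feature points to motor positions in [0.0, 1.0]."""
    if len(points) != N_POINTS:
        raise ValueError(f"expected {N_POINTS} points, got {len(points)}")
    scale = distance(points, *scale_pair)
    if scale == 0:
        raise ValueError("reference points coincide; cannot normalise")

    positions: Dict[str, float] = {}
    for name, rule in rules.items():
        d = distance(points, rule.point_a, rule.point_b) / scale
        # Linear interpolation between the neutral and extreme calibration.
        t = (d - rule.neutral) / (rule.extreme - rule.neutral)
        # Clamp so the motor is never commanded outside its range.
        positions[name] = min(1.0, max(0.0, t))
    return positions
```

Calling `points_to_motors` once per video frame would yield a sequence of motor targets for playback. In a design like this, the same function could in principle serve the imitation step, with frames coming from the camera pointed at the child rather than from a stored video.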


Videos are processed by the FaceTracker, and a set of 66 facial feature points is tracked.

These feature points are then converted into motor positions on the robot.

Selected publications

- An android head for social-emotional intervention for children with autism spectrum conditions

Andra Adams and Peter Robinson

In the Doctoral Consortium for the International Conference on Affective Computing and Intelligent Interaction (ACII), Memphis, USA, October 9-12, 2011.