Emotionally intelligent interfaces

Andra Adams, Tadas Baltrušaitis, Ntombi Banda, Marwa Mahmoud, Quentin Stafford-Fraser, Erroll Wood, Heng Yang & Peter Robinson

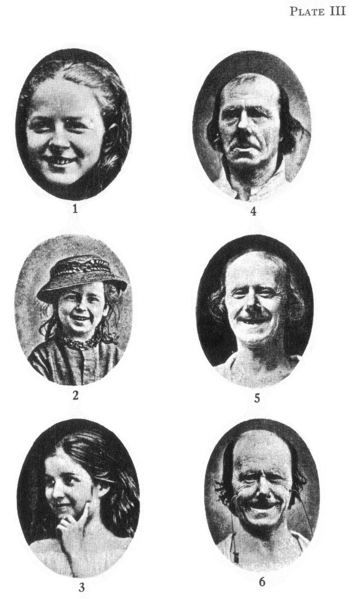

Expressions of emotions [Darwin 1872]

With the rapid advances in key computing technologies and the heightened user expectation of computers, the development of socially and emotionally adept technologies is becoming a necessity. This project is investigating the inference of people's mental states from facial expressions, vocal nuances, body posture and gesture, and other physiological signals, and also considering the expression of emotions by robots and cartoon avatars.

Facial expressions provide an important spontaneous channel for the communication of both emotional and social displays. They are used to communicate feelings, show empathy, and acknowledge the actions of other people.

In this research we investigate how facial expression information can be used as part of a wider context to make useful inferences about a user's mental state in a natural computing environment, in a way that increases usability. We draw inspiration from various emotion theories on the role of facial expressions in inferring mental states, most notably the role of temporal and situational context in the process.

Applications

Testing our inference system has shown the computer to be as accurate as the top 6% of people. But would we want computers that can react to our emotions? Such systems do raise ethical issues: Imagine a computer that could pick the right emotional moment to try to sell you something. There are, however, applications with clear benefits including an emotional hearing aid to assist people with autism, usability testing for software, feedback for on-line teaching, and informing the animation of cartoon figures.

We have been working since 2004 on a wearable system that helps people with Autism Spectrum Conditions and Asperger Syndrome with emotional-social understanding and mind-reading functions. Rana el Kaliouby, who was awarded a PhD for her work on the project, is currently implementing the first prototype of the system at the Massachusetts Institute of Technology's Media Lab.

Metin Sezgin joined the team in Cambridge to look at ways of improving the inference of mental states by combining multiple sources of information, including biometric sensors. Tal Sobol-Shikler investigated the effects of emotions on non-verbal cues in speech for her PhD at Cambridge, and is now pursuing the work at Ben-Gurion University. Daniel Bernhardt, another research student, extended the system to recognise further channels of affective communication such as posture and gesture. Shazia Afzal investigated applications of affective inference to support on-line teaching systems, and Laurel Riek looked at the expression of emotions by humanoid robots.

Another important area is discerning drivers' mental states. If a driver gets lost while trying to find a route through an unfamiliar city in heavy traffic, the burden of understanding advice from a navigational system could actually be more of a hindrance than a help. We have been working with a major motor manufacturer on systems to detect when a driver is confused, distracted, drowsy or even upset, and adapt the car's telematic systems accordingly.

Two post-docs and three research students are currently working on affective computing: Tadas Baltrušaitis is considering affect in remote communications, Andra Adams is working on affective robotics for autism spectrum conditions, Ntombi Banda is working on fusion techniques for affective inference, Marwa Mahmoud is working on multi-modal inference of occluded gestures, and Vaiva Imbrasaitė is looking at the effects of music on emotions.

Further information

Please follow the links below for specific projects:

- Facial affect inference

- Mind-reading machines

- Body movement analysis

- Hand-over-face gestures

- Vocal affect inference

- Affective robotics

- Robots for autism

- Learning and emotions

- Empathic avatars

- Recreating Darwin's emotion experiment

- Helping children with Autism understand and express emotions

Please check the frequently asked questions or contact Peter Robinson for further information.

Videos

- Mind-reading machines (video made for the Royal Society Summer Science Exhibition in 2006)

- Interactive control of music using emotional body expressions (video made for CHI in 2008)

- Multi-modal inference for driver-vehicle interaction (2009)

- The emotional computer (2010)

Press coverage

- Sunday Mail report on The bore-ometer (April 2006)

- BBC report on monitoring car drivers (2008)

- Cambridge News (2009)

- University press release for The emotional computer (December 2010)

- The Telegraph (December 2010)

- Athena TV report on affective computing (January 2011, starting about 35 minutes into the video)

- Reuters report on affective computing (February 2011)

- BBC report (March 2011)

- University coverage of the project (March 2011)

- Channel Nine Discovery Channel (May 2011, starting about 5 and a half minutes into the main video)

- RAI Superquark (June 2011, starting at about 1:07:30 into the main programme)

- University coverage of the Darwin project (October 2011)

- Reuters report on the Darwin project (October 2011)

- BBC World Service programme and report (November 2011)

- Anglia news report (March 2012)

- New York Times (October 2012)

- BBC World Service Click (January 2013)

- BBC News article (March 2013)

- Blog about a lecture at the Faraday Institute (March 2013)

- Dara O Briain's Science Club and trail (July 2013)

- Naked Scientists (September 2013)

Other contributors

- Shazia Afzal: Affect inference in learning environments

- Daniel Bernhardt: Emotion inference from human body motion

- Ian Davies

- Yujian Gao

- Rana el Kaliouby: Mind-reading machines

- Tomas Pfister

- Laurel Riek: Expression synthesis on robots

- Metin Sezgin

- Tal Sobol-Shikler: Analysis of affective expression in speech