Computer Laboratory 75th Anniversary

Poster competition

A range of posters showcasing research in the department were displayed throughout our 75th Anniversay Celebrations. There was an opportunity to discuss the researchers’ work informally during the day. This page summarises the posters on display at the event.

All attendees were offered the opportunity to vote on the best poster paper, and prizes were awarded on the basis of the vote. The results were:

- Winner

- Ramsey Faragher, for his poster Opportunistic positioning. He received a signed book and £1000 to spend on research and travel.

- Joint Runners up

- Sergei Skorobogatov, for his poster Silicon scanning reveals hidden backdoors in semiconductor chips; and

Anastasios Noulas with Human urban mobility in location-based social networks: analysis, models and applications.

They each received a book and £500 to spend of research and travel.

Artificial Intelligence Group (hide abstracts)

-

1: Computation in Bacterial Metabolism

1: Computation in Bacterial Metabolism

Claudio Angione

Biologically inspired computation has been recently used with mathematical models towards the design of new synthetic organisms. In a biochemical pathway, an enzyme reads the amount of reactants (substrates) and converts them into products. Therefore, in this work we consider the metabolism as a living computer, and we program it in order to obtain desired outputs. We propose and exploit a mapping between the metabolism and a register machine (RM) to show that bacteria could have computational capability and act as molecular Turing machines (TM). The reactions in the bacterium constitute the increment/decrement instructions, while the registers count the number of molecules of each metabolite. The RM is equivalent to the TM, being a multi-tape TM with the tapes restricted to work as simple counters. We report that the density and gradient of the Pareto curve are useful tools to compare models and understand their structure, while modelling organisms as computers proves useful to carry out computation using biological machines with specific input-output conditions.

View PDF

Computer Architecture Group (hide abstracts)

-

2: Superscalar Performance without Superscalar Overheads

2: Superscalar Performance without Superscalar Overheads

Ali Mustafa Zaidi

With the impending Dark Silicon problem spelling doom for multicore performance scaling, there is an ever increasing need for processor architectures with much better energy efficiency. To address this, designers are increasingly utilising custom (and reconfigurable) computing to provide orders-of-magnitude improvements in energy efficiency over equivalent software implementations of the same code. Unfortunately, there are two key issues with custom computing: (1) when implementing irregular code with complex control-flow, it is often unable to match the performance of conventional processors, and (2) developing custom hardware is far more complex and time-consuming than writing the equivalent software. My proposed research aims to address both of the above issues. By providing a fully automated mechanism for compiling high-level languages to hardware, as well as, improving performance of control-flow intensive code in hardware, I aim to enable much more pervasive utilization of custom and reconfigurable hardware for general-purpose computation, thereby helping to mitigate the effects of dark silicon.

View PDF -

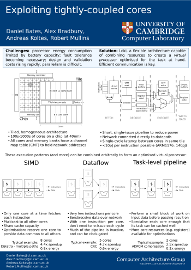

3: Exploiting tightly-coupled cores

3: Exploiting tightly-coupled cores

Daniel Bates, Alex Bradbury, Andreas Koltes, Robert Mullins

Computer architects face many concurrent challenges: they must provide ever-increasing performance with a static power budget and unreliable transistors. Multi-core architectures are now commonplace, but can be difficult to use effectively. I introduce Loki, a homogeneous, flexible, low-power architectures which can use software to combine many cores to produce a virtual processor which is optimised for the task at hand.

View PDF -

4: Analysis of Hybrid Cache Coherence Schemes in Large Multicore Systems

4: Analysis of Hybrid Cache Coherence Schemes in Large Multicore Systems

Alan Mujumdar

Memory management is critical in any computer architecture design. Numerous schemes have been proposed in the past, but few were tested in hardware. We aim to design a hybrid coherence system and implementing it on an FPGA. This hybrid system will blend a local snoopy cache coherence mechanism with a global directory scheme. So far we have succeeded in creating a multi-core system based on the BERI MIPS processor. The system currently operates on a single FPGA and uses a snoopy cache coherence mechanism. We will be extend our design to multiple FPGA's, in the near future.

View PDF -

5: Exploring the Hardware-Software Interface using an FPGA-based Research Platform

5: Exploring the Hardware-Software Interface using an FPGA-based Research Platform

Robert Norton

We present a platform to allow simultaneous development of hardware and software, providing a complete OS stack on readily available and affordable, customisable hardware. Using this platform we are exploring novel CPU features to support fine-grained memory protection, and also support for low-latency communication primitives in multi-core shared memory systems.

View PDF -

6: Bluehive: A Scalable Platform for Million-Scale Real-Time Neural Computation

6: Bluehive: A Scalable Platform for Million-Scale Real-Time Neural Computation

Theo Markettos

Bluehive is a custom 64-FPGA machine targeted at real-time neural computation exceeding one million neurons.

View PDF -

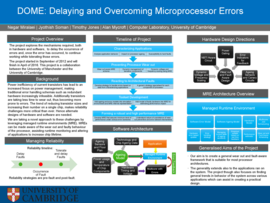

7: DOME: Delaying and Overcoming Microprocessor Errors

7: DOME: Delaying and Overcoming Microprocessor Errors

Jyothish Soman

Power inefficiency of current transistors has lead to an increased focus on power management, making traditional error handling schemas such as redundant hardware increasingly infeasible. Additionally transistors are taking less time to wear out, thus becoming more prone to errors. The trend of reducing transistor sizes and increasing their number on a single chip, makes reliability challenges more critical than ever. Hence alternate designs of hardware and software are needed. We are taking a novel approach to these challenges by leveraging managed runtime environments (MRE). MREs can be made aware of the wear out and faulty behaviour of the processor, assisting runtime monitoring and altering of applications to increase chip lifetime.

View PDF

Digital Technology Group (hide abstracts)

-

8: Scalable Wireless Access Control

8: Scalable Wireless Access Control

Bogdan Roman

While unveiling the iPhone 4 in the summer of 2010, Steve Jobs' demo came to a halt: "There are over 570 WiFi stations operating in this room. We can't deal with that." he said to an audience among which news people and bloggers were sending and streaming info on his presentation. The number and density of wireless devices has exploded. In Manhattan, there have been reports of over 150 WiFi Access Points visible from one flat. APs two feet away are inaccessible due to excessive channel contention, channel capture, hidden nodes, exposed nodes and other effects. Vehicular networks are next, adding high mobility to the mix. We propose a cross-layer scheme called Multi-Carrier Burst Contention, or MCBC, which strives to provide scalability, robustness and high performance in demanding scenarios.

View PDF -

9: Cross-Layer Instrumentation for Smartphones

9: Cross-Layer Instrumentation for Smartphones

James Snee

When testing software, we often see outliers in the experimental results. Investigating these anomalies is difficult given the number of interacting subsystems and layers of indirection that make up modern computer systems. Figure 1 shows a simplified view of a typical, deep-layered system. These layers exist primarily to abstract away the complexity of controlling the hardware directly, therefore providing a simpler programming interface to the user. But all of these interacting layers make it difficult to identify exactly why two executions of the same application may perform differently. We hypothesise that the shared state inherent within the many layers of the system stack, directly affects the behaviour of the running application. We believe that this is important to investigate since many current smartphone software stacks make use of shared services to provide anything from location updates to network state monitoring and that their internal state directly influences the behaviour of the application making use of them. Existing profiling and debugging tools often only provide execution traces at a single layer and at a fixed granularity and therefore aren't able to identify these cross-layer bugs. We propose a method that makes use of cross-layer instrumentation and analysis methods that can identify anomalies in application execution behaviour and allows for an investigation into their origins.

View PDF -

10: Energy Limits in Location Tracking

10: Energy Limits in Location Tracking

Mattias Linnap

Existing work in energy-efficient location tracking uses relative energy use as the main evaluation metric. We argue that comparisons of sensor scheduling algorithms should include the energy limit: the lower bound energy use achievable by any tracker on the same hardware and movement path. The limit is independent of advances in scheduling algorithms, and shows how much future progress is possible in the area. It is also a metric for the inherent energy-efficiency of tracking hardware, and the energy complexity of movement paths.

View PDF -

11: FRESCO: Understanding the Provenance of Data

11: FRESCO: Understanding the Provenance of Data

Lucian Carata

Provenance is still a fledgling field of research in Computer Science. We believe the work of the FRESCO project will result in general purpose systems that allow users to more confidently reason about the sources and accuracy of their data. Our ultimate goal is to make provenance support a fundamental aspect of all general purpose computing systems.

View PDF -

12: Nigori: Secrets in the Cloud

12: Nigori: Secrets in the Cloud

Daniel Thomas

The aim of the Nigori project is to develop a pratical, application neutral, peer-reviewed mechanism for storing sensitive user data in the cloud in such away that the cloud provider and application developer cannot read any of the stored data. Nigori handles the synchronisation of data in a distributed system of devices using untrusted cloud services and local reconciliation using application developer specified logic.

View PDF -

13: Device Analyzer

13: Device Analyzer

Daniel Wagner

Device Analyzer is an application for Android that volunteers install on their devices to contribute usage statistics to our growing database. We then mine this database to extract usage patterns and learn how to improve smartphones in the future.

View PDF -

14: Wireless Communications In Vehicles

14: Wireless Communications In Vehicles

Steven Herbert

Whilst driverless cars and vehicle-to-vehicles communications have been grabbing the headlines recently, a quiet revolution has been going on in the field of communications within in the vehicle itself. The smart-phone generation demand constant access to media, and when in their vehicles this is no different. So called infotainment systems are now commonplace in high-spec modern cars, as well as sophisticated sensor networks to control environmental conditions such as temperature and CO2 levels. With vehicles already creaking under the weight of hundreds of metres of cabling, wireless communications are becoming an increasingly attractive prospect for manufacturers, not to mention having desired property of allowing users to wirelessly connect their smart devices such as tablet computers and phones to the vehicle’s systems. But there is a problem- a good analogy can be made between the radio propagation in a vehicle cavity, and that of a reverberation chamber- a controlled environment designed specifically to make the electric field (and hence wireless communications) as random as possible. How can we deploy effective wireless communication systems in such a harsh radio propagation environment? We assert that the best way to solve this problem is through a bottom up approach- starting with a rigorous analysis of the propagation of electromagnetic waves in the vehicle cavity, then calculating the theoretical information capacity of the in-vehicle channel, and finally using this to deploy communications systems.

View PDF -

15: Unconstrained indoor localisation on a smartphone

15: Unconstrained indoor localisation on a smartphone

Agata Brajdic

Obtaining location inside indoor areas is becoming increasingly important, yet no systems for indoor localisation are readily available. Mobile technology is enabling pervasive localisation, however current approaches often assume existing environmental infrastructure. The goal of my PhD research is to develop an indoor location system for modern smartphones that can localise a user within a few meters, without relying on existing infrastructure. This poster presents a snapshot of two lines of my PhD work. Smartphone pedometry offers the possibility of ubiquitous indoor location tracking through pedestrian dead reckoning. However, there is currently no detailed understanding of how well pedometry works when applied to smartphones in typical use. In the first line of my work, I investigated performance of common step detection algorithms on a large dataset of walk traces obtained from smartphones in different placements, to find suitable candidates for indoor localisation. Particle filters are the only method to date that enables absolute localisation using only relative inertial data. Modern mobile phones however lack processing power to perform such localisation in real time. In the second line of my work, I developed a hybrid particle filter based location system that allows dynamic offloading and loading of the computation to and from a server (cloud). The scalability on the server side has been mitigated by exploiting the latent parallelism in the particle filters algorithm and adapting it for execution on commodity Graphical Processing Units (GPUs).

View PDF -

16: Ubiquitous Outdoor and Indoor Positioning From High Altitude Platforms

16: Ubiquitous Outdoor and Indoor Positioning From High Altitude Platforms

Wafa Isam

If we can locate people and things outdoors and indoors we can build intelligent applications that can make our lives easier, safer and more fun. These applications require different levels of location information accuracy and different context (e.g. coordinate, containment and/or proximity). For example, building an application that transfer landline calls to your mobile requires coordinates or proximity location information, i.e. which base station is the nearest to you. While building an application that switch the air conditioner when you are home requires containment location information, i.e. house level accuracy. This concept is defined by Hopper in [1] in which is known as the Sentient Computing paradigm. Location information is a key element to the actualization of the Sentient Computing paradigm. Up to now there is no technology that can provide location information both indoors and outdoors with a reasonable accuracy and/or reasonable amount of hardware infrastructure and with seamless transition between the outdoor environment and the indoor environment. GPS satellites are at an altitude of 20,200 km , the GPS signal has to traverse the ionosphere where it experiences scintillation effects ; a very difficult time varying phenomenon that is hard to be modelled accurately. Due to this and so many other facts GPS does not work indoors. Can we use pseudo satellites -High Altitude Platforms (HAPs) at an altitude of 20 km to provide positioning information both outdoors and indoors? This altitude is below the ionosphere that the signal will not experience the scintillation. Moreover, the platform will not be subject to weather conditions because it is above the atmosphere where weather exists. These HAPs are more flexible than GPS in terms of deployment and maintenance. But, there is some degree of randomness associated with their position. Our aim is to modell how the uncertainty of reference nodes (HAPs) in the positioning system will affect the accuracy of the positioning system and how we can eliminate this positioning error that is associated with randomness of the HAP position.

View PDF -

17: Opportunistic Positioning

17: Opportunistic Positioning

Ramsey Faragher

We rely heavily on Global Navigation Satellite Systems (GNSS) such as GPS for Aviation, Emergency Services, Defence, Mining, Agriculture, Telecommunications and even Banking. In 2011 it was noted that around €800 billion of the European economy is dependent on GNSS. A study by the Royal Academy of Engineering in March 2011 entitled “Global Navigation Space Systems: reliance and vulnerabilities” provided an examination of society’s strong dependence on GNSS and discussed twenty four failure modes of GNSS systems. This research programme addresses the major concerns of this report by improving the reliability and availability of GNSS, even in indoor environments, without needing new infrastructure.

View PDF

Graphics & Interaction Group (Rainbow) (hide abstracts)

-

18: Can We Augment Reality with “Mental Images”? Pretend Play Elicitation for Children with Autism

18: Can We Augment Reality with “Mental Images”? Pretend Play Elicitation for Children with Autism

Zhen Bai

We investigate the potential of Augmented Reality (AR) as a specific external representation to stimulate internal mental imagery and play activities involving pretense for young children with autism.

View PDF -

19: Computational Thinking in Multiple Representations

19: Computational Thinking in Multiple Representations

Alistair Stead

Teachers currently use several representations, over different types of media, to teach students Computational Thinking concepts. Our goal is to create a single system that can support students to acquire these skills, while reducing barriers to programming.

View PDF -

20: Cornsweet Surfaces for Selective Contrast Enhancement

20: Cornsweet Surfaces for Selective Contrast Enhancement

Jiri Kosinka

The contrast of photographs or rendered images is commonly enhanced to increase the image’s visual appeal. Normal computer science approaches take no account of image content. In artwork, spatial configurations are use to increase apparent contrast. We have a spatially aware contrast enhancement algorithm, which uses image structure to hide the countershading profiles. We can achieve enhancements that better match the countershading used by artists, with no loss of contrast compared to traditional contrast enhancement techniques.

View PDF -

21: Emotional investment in naturalistic data collection

21: Emotional investment in naturalistic data collection

Ian Davies

We present results of two experiments for naturalistic data collection of the physiological effects of cognitive load in command and control. In addition to traditional driving simulation, we propose a remote-control task which allows experimental subjects to remain in a laboratory setting, performing a real-world task in a completely controlled environment.

View PDF -

22: 3D Constrained Local Model for Facial Tracking

22: 3D Constrained Local Model for Facial Tracking

Tadas Baltrusaitis

Facial expression and head pose are rich sources of information which provide an important communication channel for interaction. A crucial initial step in many affect sensing, face recognition and human behaviour understanding systems is the estimation of head pose and tracking of facial feature points. The availability of accurate and affordable depth cameras leads us to explore this new channel for facial tracking.

View PDF -

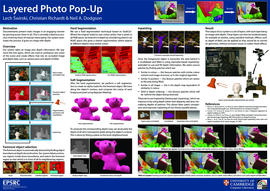

23: Layered Photo Pop-Up

23: Layered Photo Pop-Up

Lech Swirski

We present a system for segmenting images with depth into a layered representation. This layered representation can be used to synthesise new views of the scene and create effects, such as camera pans and depth-of-field, that rely on occluded image and depth data. We extract layers, and fill the holes they leave, using novel extensions of both GrabCut and exemplar-based inpainting. These extensions allow the algorithms to work on images with depth and use the depth information to improve the algorithms’ accuracy. We also introduce a new method for automatically selecting foremost elements in an image, enabling us to extract them in front-to-back order. We describe a mesh-based real-time renderer, optimised for rendering our layered images with depth.

View PDF -

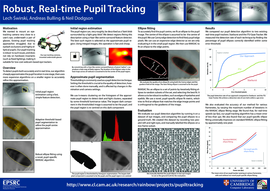

24: Robust, Real-Time Pupil Tracking

24: Robust, Real-Time Pupil Tracking

Lech Swirski

Robust, accurate, real-time pupil tracking is a key component for online gaze estimation. On head-mounted eye trackers, existing algorithms that rely on circular pupils or contiguous pupil regions fail to detect or accurately track the pupil. This is because the pupil ellipse is often highly eccentric and partially occluded by eyelashes. We present a novel, real-time dark-pupil tracking algorithm that is robust under such conditions. Our approach uses a Haar-like feature detector to roughly estimate the pupil location, performs a k-means segmentation on the surrounding region to refine the pupil centre, and fits an ellipse to the pupil using a novel image-aware Random Sample Concensus (RANSAC) ellipse fitting. We compare our approach against existing real-time pupil tracking implementations, using a set of manually labelled infra-red dark-pupil eye images. We show that our technique has a higher pupil detection rate and greater pupil tracking accuracy.

View PDF

Natural Language and Information Processing Group (hide abstracts)

-

25: Learning a Theory of Marriage (and other relations) from a Web Corpus

25: Learning a Theory of Marriage (and other relations) from a Web Corpus

Sandro Bauer

We use a large-scale knowledge base (Freebase) and a Web-size corpus (ClueWeb09) to learn more detailed representations of real-world concepts such as "marriage" or "working for a company" -- or, concretely, collections of related relations which people associate with these concepts. We use a weighting scheme to give more importance to dependency graph snippets which mostly occur with the concept in question.

View PDF -

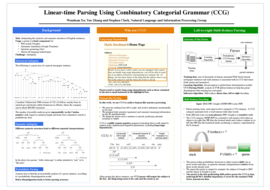

26: Linear-time Parsing Using Combinatory Categorial Grammar (CCG)

26: Linear-time Parsing Using Combinatory Categorial Grammar (CCG)

Wenduan Xu

Natural language parsers are at the core for almost all human language technologies. However, compared with programming language compilers which are able to parse deterministically in linear time, natural language parsers are often not accurate nor fast enough for web-scale parsing, especially when deep syntactic/semantic structures are needed. We present an efficient and accurate linear-time parser for English, enabling left-to-right, incremental, integrated syntactic/semantic interpretations. Our parser uses probabilistic Combinatory Categorial Grammar (CCG) and is trained with a global discriminative model. CCG is well-suited to capture a wide range of linguistic phenomena, including coordination, extraction and arguably it provides the most linguistically satisfactory account of long-range and unbounded dependency recovery from these phenomena. Combining the strengths of CCG and a rich discriminative model, our parser is the best-performing shift-reduce parser for CCG to date, achieving 85.96% labelled dependency F-score for the standard Wall Street Journal test data.

View PDF -

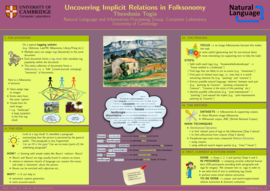

27: Uncovering Implicit Relations in Folksonomy

27: Uncovering Implicit Relations in Folksonomy

Theodosia Togia

In social tagging systems, annotation of a digital document, such as a picture, is often performed by more than one user, resulting in a multi-set of keywords that collectively 'describe' this item. Given the expectation to assign individual labels to documents, as opposed to coherent text, users are forced to omit much of the language they would normally have used to describe the document. Recovering some of this lost natural language can start in the form of tag(s)--language--tag(s) triples, for example, 'woman'--'with a'--'hat', where the natural language relation 'with a' is extracted between the tags 'woman' and 'hat'. This poster presents some ongoing work, which can ultimately aid in advanced search on tagging websites and automatic comment generation for labelled digital items.

View PDF -

28: An investigation of imitation learning algorithms for structured prediction

28: An investigation of imitation learning algorithms for structured prediction

Andreas Vlachos

In the imitation learning paradigm algorithms learn from expert demonstrations, thus relieving practicioners from having to specify a reward function. Daume et al. (2009) framed structured prediction in this paradigm and developed the search-based structured prediction algorithm (SEARN) which has been applied successfully to various natural language processing tasks with state-of-the-art performance. Recently, Ross et al. (2011) proposed the dataset aggregation algorithm (DAgger) and evaluated it in sequential prediction problems. In this paper, we compare these two algorithms in the context of a complex structured prediction task, namely biomedical event extraction. We demonstrate that DAgger has more stable performance and faster learning than SEARN, and that these advantages are more pronounced in the parameter-free versions of the algorithms.

View PDF -

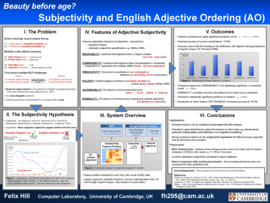

29: Beauty Before Age?: Applying Subjectivity Analysis to English Adjective Ordering

29: Beauty Before Age?: Applying Subjectivity Analysis to English Adjective Ordering

Felix Hill

The preferred order of pre-nominal adjectives in English is determined primarily by semantic factors. Nevertheless, Adjective Ordering (AO) systems do not generally exploit semantic features. This paper describes a system that orders adjectives with significantly above chance accuracy solely on the basis of semantic features pertaining to the cognitive/semantic dimension of subjectivity. The results show that integrating such semantic approaches into current methods could result in more accurate and robust AO systems.

View PDF

Programming, Logic, and Semantics Group (hide abstracts)

-

30: Coeffects: Programming languages for rich environments

30: Coeffects: Programming languages for rich environments

Tomas Petricek, Dominic Orchard

Applications today run in diverse environments, such as mobile phones or the cloud. Different environments provide different capabilities, data with meta-data and other resources. Applications access information and resources of the environment. Such context-dependent interactions are often more important than how the application affects or changes the environment. Tracking and verifying how computations affect the environment can be done in a unified way using monadic effect systems, but no such mechanism exists for tracking and verifying how computations access and rely on the context.

View PDF -

31: Aliasing Contracts - Untangling Spaghetti References

31: Aliasing Contracts - Untangling Spaghetti References

Janina Voigt

In modern object-oriented programming, a variable stores a reference to an object, rather than the object itself. Therefore multiple variables can point to the same object; we call this aliasing. Aliasing causes problems because if two variables unintentionally point to the same memory location, modifying one will affect the other. This is a common cause of bugs. We propose aliasing contracts to mitigate the effects of aliasing, by allowing developers to specify under which circumstances an object should be accessible. This helps protect it from unexpected accesses through other aliases.

View PDF -

32: Formal Verification of Machine Code

32: Formal Verification of Machine Code

Anthony Fox

This poster presents our research on the formal verification of machine code programs. This research has applications in computer security and safety-critical systems, where there is a need to rigorously prove that software is bug free and trustworthy. We use the HOL4 interactive theorem prover for this work and have also developed a custom instructions set specification language called L3. We have used L3 to model the two of the most popular computer architectures: ARM and x86.

View PDF -

33: Burden of proof

33: Burden of proof

Nik Sultana

The burden of checking mathematical proofs has been relieved slightly by using computers. Further gains are achieved by using computers to find, as well as check, proofs for increasingly complex theorems.

View PDF -

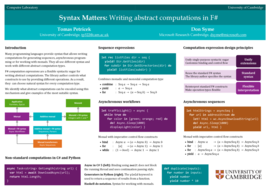

34: Syntax Matters: Writing abstract computations in F#

34: Syntax Matters: Writing abstract computations in F#

Tomas Petricek

Many programming languages provide syntax that allows writing computations for generating sequences, asynchronous programming or for working with monads. They all use different syntax and work with different abstract computation types. F# computation expressions are a flexible syntactic sugar for writing abstract computations. The library author controls what constructs to use by providing different operations. As a result, they can choose natural syntax for every computation type. We identify what abstract computations can be encoded using this mechanism and give examples of the most suitable syntax.

View PDF -

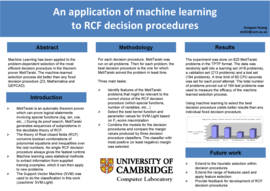

35: An application of machine learning to RCF decision procedures

35: An application of machine learning to RCF decision procedures

Zongyan Huang

Machine Learning has been applied to the problem-dependent selection of the most efficient decision procedure in the theorem prover MetiTarski. The machine-learned selection process did better than any fixed decision procedure (Z3, Mathematica and QEPCAD).

View PDF

Security Group (hide abstracts)

-

36: Privacy Leak in Egonets

36: Privacy Leak in Egonets

Kumar Sharad and George Danezis

A major Telecom released anonymized communication sub-graphs of a few thousand randomly selected subscribers for scientific analysis. To obfuscate the interactions between the sub-graphs the common nodes were renamed. However, this is not enough to preserve privacy.

View PDF -

37: Cross-Language Interoperability for Secure, Fast, Easy, and Maintainable Code

37: Cross-Language Interoperability for Secure, Fast, Easy, and Maintainable Code

David Chisnall

This poster gives an overview of a body of work related to close interoperability between programming languages. This is important as different programming languages have different trades in the space between performance and concise expression. Low-level languages make it easy to directly access hardware features, high-level languages have additional safety properties, and domain-specific languages allow very dense code within a specific problem domain. This research encompasses three problems. The first is psychological: How do we minimise the cognitive overhead for a programmer working in multiple languages? The second relates to performance. We must ensure that the cost of using a high-level language is sufficiently low (at the very least, within an order of magnitude of a low-level language) that low-level languages are only used for performance-critical segments. More importantly, we must ensure that the cost of bridging is not so high that the gains of using a low-level language for a performance-critical segment are not lost at the language barrier. Finally, we want to preserve the safety properties that make high-level languages attractive from a security perspective, even when they are sharing an address space with code written in unsafe languages such as C. We have explored all aspects of this problem, with an experimental framework based on LLVM allowing multiple languages to share the same underlying object model, with easily-extensible syntax, and a new hardware architecture with an object-capability memory model.

View PDF -

38: Analysis and decryption of Apple's FileVault 2

38: Analysis and decryption of Apple's FileVault 2

Omar Choudary

With the launch of Mac OS X 10.7 (Lion), Apple has introduced a volume encryption mechanism known as FileVault 2. In this work we provide the first publicly available security evaluation and detailed description of this system. We performed an extensive analysis of FileVault 2 and we identified the algorithms and data structures needed to successfully read an encrypted volume. Based on this analysis we have developed an open-source library, named libfvde, that can decrypt and mount volumes encrypted with FileVault 2. This tool can be used to perform forensic investigations on FileVault 2 encrypted volumes. We also present a security evaluation of the system and comment on some of the design and implementation features, including the random number generator used to derive the recovery password. During our evaluation we discovered that part of the user data was left unencrypted and we reported this to Apple, who fixed the issue in the following OS update.

View PDF -

39: Silicon scanning reveals hidden backdoors in semiconductor chips

39: Silicon scanning reveals hidden backdoors in semiconductor chips

Sergei Skorobogatov

With the globalisation of semiconductor manufacturing, integrated circuits become vulnerable to malevolent activities in the form of Trojan and backdoor insertion. Having a security related backdoor on a silicon chip jeopardises any efforts of adding software level protection. We demonstrated how a deliberately inserted backdoor can be found in the ‘highly secure’ FPGA chip used in both military and sensitive industrial applications.

View PDF

Systems Research Group (hide abstracts)

-

40: The Fountain of Knowledge

40: The Fountain of Knowledge

Toby Moncaster

Storage has been the poor relation of the data centre research family. For years the approach has been to rely on local storage, moving the work to the data rather than the data to the work. However, recent advances in data centre networking have opened up new opportunities for a more flexible approach. This poster presents a new approach, applying the concept of a digital fountains to the problem of data centre storage. This is only a preliminary idea so far but it looks like it offers some desirable properties.

View PDF -

41: NetFPGA - Open Source Network Hardware

41: NetFPGA - Open Source Network Hardware

Neelakandan Manihatty-Bojan, Georgina Kalogeridou, Andrew Moore

The NetFPGA project provides a flexible teaching and research tool - permitting instrumentation and prototyping of hardware-accelerated networking systems running at line rate.

View PDF -

42: Power Optimized 10Gbps Optical Transceivers

42: Power Optimized 10Gbps Optical Transceivers

Yury Audzevich

Power consumption and energy proportionality of networking equipment are under increasing scrutiny. To better understand the impact of different transmission codecs on the power characteristics of the standard 10Gbps optical transceivers, two distinctive physical layer designs have been analysed. We have created a library of transceiver circuits in 45 nm CMOS standard and estimated power consumption using representative Ethernet traces with digital synthesis and spice tools. Based on this study, we propose a burst mode physical layer protocol suitable for power gated or optically switched links, which might not only provide sufficient synchronization time but also reduce the power footprint during the periods of inactivity.

View PDF -

43: The Game-Theory of Technology Use

43: The Game-Theory of Technology Use

Andre Ribeiro

Technology and media as instruments for cooperation and competition. What problems groups solve with them, and how they can improve. New models for GUI, Website and Knowledge Repository use.

View PDF -

44: A scalable emulation framework for Software Defined Networks in Data Centres

44: A scalable emulation framework for Software Defined Networks in Data Centres

Dimosthenis Pediaditakis

We present the design of an emulation framework aiming to facilitate the early-stage evaluation of large-scale SDN deployments in virtualised data centre environments. Our approach builds on top of two of the most popular virtualisation tools, Xen Server and OpenVSwitch. The user describes a network topology via a custom language which provides basic components like switches, hosts, virtualised guests and OpenFlow controllers. Given an emulation scenario that specifies workloads and virtual machine migrations, the emulator automatically builds, runs and assesses SDN's performance.

View PDF -

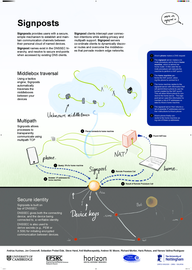

45: Signposts: End-to-End Networking in a World of Middleboxes

45: Signposts: End-to-End Networking in a World of Middleboxes

Haris Rotsos

Signposts provides users with a secure, simple mechanism to establish and maintain communication channels between their personal cloud of named devices. Signpost names exist in the DNSSEC hierarchy, and resolve to secure end-points when accessed by existing DNS clients. Signpost clients intercept user connection intentions while adding privacy and multipath support. Signpost servers co-ordinate clients to dynamically discover routes and overcome the middleboxes that pervade modern edge networks.

View PDF -

46: Evolving TCP. How hard can it be?

46: Evolving TCP. How hard can it be?

Toby Moncaster

TCP has long been the dominant transport protocol in the Internet. As applications have evolved and the speed of connections has increased, TCP has become the jack of all trades but master of none. The problem is that middleboxes hard wire assumptions about the nature of traffic traversing them. This poster presents a new approach, Polyversal TCP. PVTCP builds on Multipath TCP, where a single TCP connection can be spread among a number of sub flows. But in PVTCP these sub flows are no longer limited to following TCP semantics, offering a much more flexible protocol that can leverage the full capabilities of the network while maintaining the reliability and graceful failure model of TCP.

View PDF -

47: Reconfigurable Middleware for Future Care Environments

47: Reconfigurable Middleware for Future Care Environments

Jatinder Singh

Future healthcare services will require the coordination of a range of different devices, applications, users and services. We present a reconfigurable middleware to enable the vision of pervasive healthcare, through the dynamic management of component interactions.

View PDF -

48: uvNIC: Rapid Prototyping Network Interface Controller Device Drivers

48: uvNIC: Rapid Prototyping Network Interface Controller Device Drivers

Matthew P. Grosvenor

Traditional approaches to NIC driver design focus on commodity network hardware, which exhibit slow moving feature sets and long product life cycles. The introduction of FPGA based network adapters such as [1][2] alter the status-quo considerably. Whereas traditional ASIC based NICs may undergo minor driver interface revisions over a timespan of years, FPGA based NIC interfaces can be totally reimplemented in months or even weeks. To the driver developer this presents a considerable challenge: Driver development cannot seriously begin without hardware support, but is now expected to take place simultaneously with hardware development. To solve this problem, I present the userspace, virtual NIC framework (uvNIC). uvNIC implements a custom virtual NIC as a standard userspace application. To the driver developer, it presents a functional equivalent to a physical device. Only minor modifications are required to switch a uvNIC enabled driver over to operating on real hardware. To the hardware designer, uvNIC presents a rapid prototyping environment for initial specifications and a fully functional model against which HDL code can later be verified.

View PDF -

49: Omega: flexible, scalable schedulers for large compute clusters

49: Omega: flexible, scalable schedulers for large compute clusters

Malte Schwarzkopf

Increasing scale and the need for rapid response to changing requirements are hard to meet with current monolithic cluster scheduler architectures. This restricts the rate at which new features can be deployed, decreases efficiency and utilization, and will eventually limit cluster growth. We present a novel approach to address these needs using parallelism, shared state, and lock-free optimistic concurrency control. We compare this approach to existing cluster scheduler designs, evaluate how much interference between schedulers occurs and how much it matters in practice, present some techniques to alleviate it, and finally discuss a use case highlighting the advantages of our approach – all driven by real-life Google production workloads.

View PDF -

50: War of the Worlds: Branch Consistency in Distributed Systems

50: War of the Worlds: Branch Consistency in Distributed Systems

Natacha Crooks

As distribution and concurrency continue to increase, developers have been presented with an increasingly complex task: how can one guarantee good performance while providing users with the illusion of a serializable and totally ordered system. This is the wrong approach. It is unrealistic to expect that a unique system view could, or even should exist at any given time. Distributed systems fundamentally consist of multiple independent executions. User decisions were made, on a single path, and in isolation. This should be reflected in the storage system. I define a model, branch consistency, which brings universes, and not simply objects to the forefront. Branches or universes are now first-class primitives of any storage engine and accurately represent the distributed reality. This model is transactional, completely lock-free and never forces merging of branches, which is left as an application-choice. The model further makes no assumption about the operations or data accessed.

View PDF -

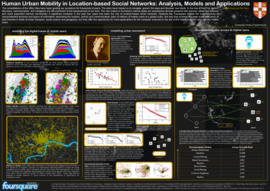

51: Human Urban Mobility in Location-based Social Networks: Analysis, Models and Applications

51: Human Urban Mobility in Location-based Social Networks: Analysis, Models and Applications

Anastasios Noulas

The constellations of the Milky Way have been guiding our ancestors for thousands of years. The stars have helped us to navigate, govern the seas and discover new lands. In the rise of the 21st century discovery, communication and navigation are still prominent to the advancement of our kind. The new means to humanity’s eternal goals are smartphone devices powered with sensors, advanced software and cloud supported internet connectivity. A significant evolution in the past four years has been the rise of location-based social networks. Systems like Foursquare enable the crowdsourcing of unprecedented amounts and layers of information describing the location, activity and communication traits of millions of mobile users at a global scale. Not only they promise the large scale evaluation of past theories in fields of urban transport, social science and geography, but they offer the opportunity for novel applications for the computer sciences. In the era of the mobile web, the stars are the mobile users.

View PDF -

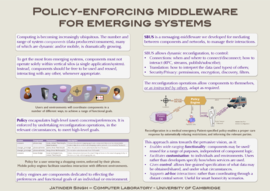

52: Policy-enforcing middleware for emerging systems

52: Policy-enforcing middleware for emerging systems

Jatinder Singh

As computing becomes ubiquitous, more and more system components will need to interact, to provide a range of different services/functionality – some of which not yet envisaged. This poster presents SBUS, a reconfigurable middleware that enables policy to drive system interactions. SBUS facilitates the dynamic use/reuse of system components for a number of purposes, thus enabling a wide-range of functionality; while providing the ability for customisation, personalisation and control.

View PDF