uvNIC: The Userspace Virtual Network Interface Controller

Matthew P. Grosvenor


Links

- uvNIC: Presentation Slides (MSN 2012) (Winner: 2012 Brendon Murphy Best Young Researcher Award)

- uvNIC: Extended Abstract (SIGCOMM 2012)

- uvNIC: Poster (SIGCOMM 2012)

Introduction

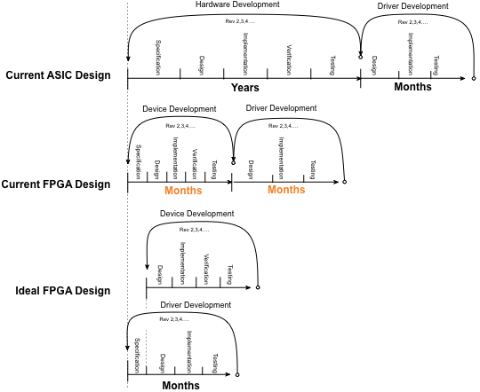

uvNIC is a collection of libraries designed to facilitate rapid prototyping of new network interface controllers (NICs) and their device drivers. The uvNIC project stems from the realisation that network cards built on programmable fabric have led to a dramatic reduction in the cycle time from idea to fully realised hardware. In times gone by, developing an updated network device would take years. In particular, producing a new or updated application specific integrated circuit (ASIC) is an expensive and labour intensive process. By contrast, network controllers built on Field Programmable Gate Arrays (FPGAs) can implement almost any piece of networking equipment with a relatively short development cycle. A skilled hardware engineer needs months or even weeks (instead of years) to produce a new or updated device. In this environment it is ideal to have the driver developer working in parallel with the hardware developer to further shorten the development cycle. This is of course problematic: how can a driver developer write a device driver for a piece of hardware that hasn’t yet been invented? Solving this problem is the explicit aim of uvNIC.

The Ideal uvNIC Development Cycle

Using the uvNIC development tools, hardware and software engineers work together to produce an executable specification of the new network hardware. This specification may be written in C, C++, Python, Haskell, OCaml or any language capable of operating on memory mapped files in a Unix like operating system. The purpose of this phase is twofold. Firstly, it produces a useful specification of the interface between the hardware and software: for instance, the control register layout, DMA mechanisms, interrupt strategy and so forth. Secondly, it yields a testable application for both the software and hardware engineers. Hardware engineers feed test bench stimuli into the specification application to produce results against which hardware simulations can be compared. Software engineers use the uvNIC tools to build a real device driver and test it against the virtual hardware device application. It is important to note that the virtual hardware application is fully functional: it forwards real packets through a raw socket. This makes it possible to test and inspect a design long before the hardware is complete.
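As a minimal sketch of what such an executable specification might start from, the Python below maps a file into memory and treats it as a block of control registers. The register names, offsets and the `RegisterFile` class are hypothetical and chosen only for illustration; they are not part of the uvNIC libraries.

```python
import mmap
import os
import struct
import tempfile

# Hypothetical register map: name -> (byte offset, struct format).
# A real specification would define its own layout.
REGISTERS = {
    "CTRL":    (0x00, "<I"),  # 32 bit control register
    "STATUS":  (0x04, "<I"),  # 32 bit status register
    "TX_BASE": (0x08, "<Q"),  # 64 bit address of the transmit descriptors
    "RX_BASE": (0x10, "<Q"),  # 64 bit address of the receive descriptors
}
REGION_SIZE = mmap.PAGESIZE


class RegisterFile:
    """A register block backed by a shared memory mapped file."""

    def __init__(self, path):
        self._fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
        os.ftruncate(self._fd, REGION_SIZE)
        # On Unix the default mapping is shared, so every process (or
        # object) that maps the same file sees the same bytes.
        self._mem = mmap.mmap(self._fd, REGION_SIZE)

    def write(self, name, value):
        offset, fmt = REGISTERS[name]
        struct.pack_into(fmt, self._mem, offset, value)

    def read(self, name):
        offset, fmt = REGISTERS[name]
        return struct.unpack_from(fmt, self._mem, offset)[0]

    def close(self):
        self._mem.close()
        os.close(self._fd)


def demo():
    """Write through one mapping and read the value back through another."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "nic_regs.bin")
        driver_view = RegisterFile(path)
        device_view = RegisterFile(path)
        driver_view.write("TX_BASE", 0x1234_5678_9ABC_DEF0)
        value = device_view.read("TX_BASE")
        driver_view.close()
        device_view.close()
        return value
```

Keeping the layout in a single table means the same description can be read by the software and hardware engineers, which is the point of agreeing on the interface early.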

Extra Benefits

Whilst the expected development cycle above is focussed on engineering new hardware, uvNIC affords developers many other benefits.

Full System Simulation

Test bench suites provide hardware engineers with a range of “pre-canned” test scenarios for exercising new hardware in a simulation environment. The problem is that these suites often lack any connection to reality, so important cases will almost certainly be missed. Using the uvNIC toolset, a hardware engineer can replace the C specification of the virtual hardware with a fully fledged hardware description language (HDL) simulator. In this scenario the simulated hardware can be tested against real traffic flows, validating the design before it ever goes near silicon.

Automated Hardware Variant Regression Testing

Over the lifetime of a typical commercial network controller, an extensive variety of minor hardware variants are produced. Production drivers are littered with exceptions and special cases relating to minor revisions of the chipsets. Unsurprisingly, driver updates are therefore problematic. In the best case, driver developers will laboriously test the driver against every variant of the hardware, a time consuming process involving multiple reboots of the hardware. In the worst case, the driver developer will test an update against one variant and simply hope the others continue to work. Using the uvNIC toolset, a new approach is possible. Hardware variants can be programmed into the userspace virtual NIC application, and regression tests can then be performed automatically against a raft of hardware variations. Tests can also be performed against static test suites and real internet traffic, hopefully leading to better overall test coverage.

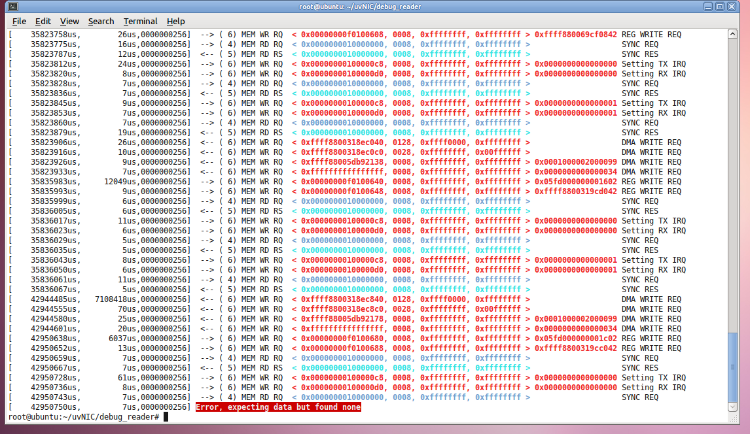

Free PCIe Capture Tool

At the lowest layer, uvNIC captures PCI traffic and redirects it away from the real hardware. It is trivial to attach the uvNIC PCI layer (uvPCI) to a driver and thereby capture the transactions that it produces. The result is a PCI capture trace of the kind usually available only to people with test equipment costing several thousand dollars. This capture may even be run live against a real hardware implementation to validate its operation.

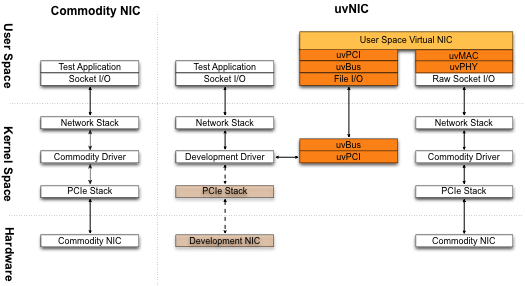

How Does it Work?

Typical NIC device drivers implement two interfaces: a device facing PCI interface and a kernel facing network stack interface. Ordinarily, a device driver would send and receive packets by interacting with real hardware over the PCI interface. Instead of (or in addition to) regular PCI operations, uvNIC forwards interactions with hardware to the uvNIC virtual NIC application. This application implements a software emulation of the hardware NIC and responds appropriately by sending and receiving packets over a commodity device operated in raw socket mode.
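To make the raw socket step concrete, the following Python sketch shows how a userspace program on Linux might build an Ethernet frame and open a raw packet socket on a commodity interface. It illustrates the general technique only. The helper names `build_frame` and `open_raw` are hypothetical and are not taken from the uvNIC code, and opening the socket requires Linux and the CAP_NET_RAW capability.

```python
import socket
import struct

ETH_P_ALL = 0x0003   # Linux constant: receive frames of every protocol
MIN_FRAME = 60       # minimum Ethernet frame length, excluding the 4 byte FCS


def build_frame(dst, src, ethertype, payload):
    """Prepend a 14 byte Ethernet header and pad to the minimum length."""
    header = struct.pack("!6s6sH", dst, src, ethertype)
    return (header + payload).ljust(MIN_FRAME, b"\0")


def open_raw(ifname):
    """Open a raw packet socket bound to one interface (Linux only)."""
    sock = socket.socket(socket.AF_PACKET, socket.SOCK_RAW,
                         socket.htons(ETH_P_ALL))
    sock.bind((ifname, 0))
    return sock


def send_one(ifname, frame):
    """Transmit a single, fully formed frame on the named interface."""
    with open_raw(ifname) as sock:
        return sock.send(frame)
```

A virtual NIC built this way would call something like `send_one("eth0", frame)` whenever the driver hands it a transmit descriptor, where `"eth0"` stands in for whichever commodity interface is available.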

Implementing the uvNIC PCI virtualisation layer is not trivial. OS kernels are designed with strict one way dependencies: userspace applications depend on the kernel, and the kernel depends on the hardware. Importantly, the kernel is not designed for, nor does it easily facilitate, dependence on userspace applications. For the uvNIC framework, this is problematic. The virtual NIC should appear to the driver as a hardware device, but to the kernel it appears as a userspace application.

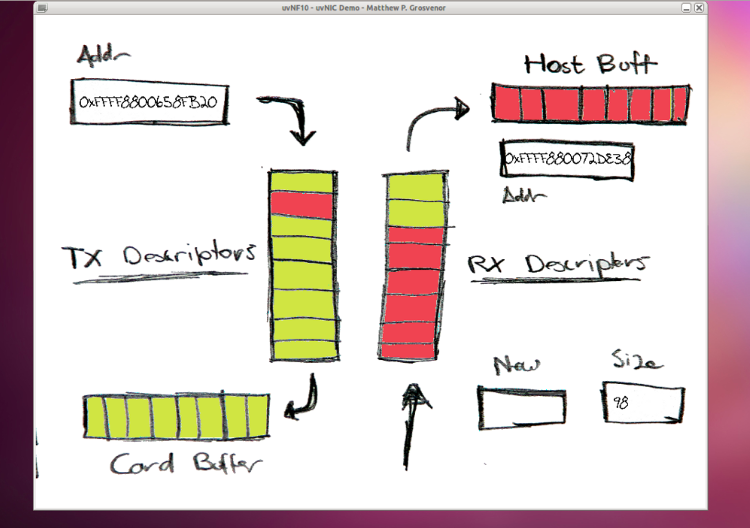

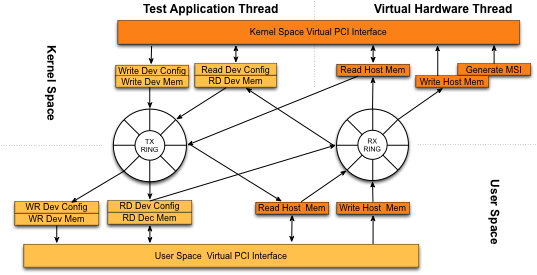

Figure 1 illustrates the uvNIC implementation in detail. At the core is a message transport layer (uvBus) that connects the kernel and the virtual device. uvBus uses file I/O operations (open(), ioctl(), mmap()) to establish shared memory regions between the kernel and userspace. Messages are exchanged by enqueuing and dequeuing fixed size packets into lockless circular buffers. Message delivery order is strictly maintained. uvBus also includes an out of band, bi-directional signalling mechanism for alerting message consumers about incoming data. Userspace applications signal the kernel by calling write() with a 64 bit signal value. Likewise, the kernel signals userspace by providing a 64 bit response to poll()/read() system calls.
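The index discipline behind a lockless circular buffer of fixed size messages can be sketched as follows, for a single producer and a single consumer. This is an illustrative model, not the uvBus implementation: the slot size, slot count, header layout and the `Ring` class are all assumptions. A kernel implementation in C would additionally need memory barriers around the index updates, which Python cannot express.

```python
import struct

SLOT_SIZE = 64    # hypothetical fixed message size in bytes
SLOT_COUNT = 8    # hypothetical ring size; one slot is always left empty
HEADER = struct.Struct("<QQ")   # write index, then read index


class Ring:
    """Single producer, single consumer ring over a shared buffer."""

    def __init__(self, buf):
        # Any writable buffer works, for example an mmap shared region.
        self.buf = buf

    def enqueue(self, msg):
        if len(msg) > SLOT_SIZE:
            raise ValueError("message larger than a slot")
        wr, rd = HEADER.unpack_from(self.buf, 0)
        nxt = (wr + 1) % SLOT_COUNT
        if nxt == rd:
            return False                      # ring is full
        off = HEADER.size + wr * SLOT_SIZE
        self.buf[off:off + SLOT_SIZE] = msg.ljust(SLOT_SIZE, b"\0")
        # Publish the slot only after its payload has been written.
        # Only the producer ever writes the write index.
        struct.pack_into("<Q", self.buf, 0, nxt)
        return True

    def dequeue(self):
        wr, rd = HEADER.unpack_from(self.buf, 0)
        if rd == wr:
            return None                       # ring is empty
        off = HEADER.size + rd * SLOT_SIZE
        msg = bytes(self.buf[off:off + SLOT_SIZE])
        # Only the consumer ever writes the read index.
        struct.pack_into("<Q", self.buf, 8, (rd + 1) % SLOT_COUNT)
        return msg


def new_ring():
    """Create a ring over private memory, which is enough for a demo."""
    return Ring(bytearray(HEADER.size + SLOT_SIZE * SLOT_COUNT))
```

Because each index has exactly one writer, neither side needs a lock, and messages leave the ring in the order they entered it.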

A lightweight PCIe like protocol (uvPCI) is implemented on top of uvBus. uvPCI implements posted (non-blocking) write and non-posted (blocking) read operations in both kernel and userspace. In kernel space, non-posted reads are implemented by spinning and kept safe with timeouts and appropriate calls to yield(). An important aspect of uvPCI is that it maintains read and write message ordering in a manner that is consistent with hardware PCIe implementations.
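The difference between posted writes and non-posted reads can be modelled in a few lines of Python. In this sketch a thread stands in for the virtual device and two queues stand in for the message transport. The class names, message tuples and default timeout are assumptions made for illustration and do not reflect the actual uvPCI message format.

```python
import queue
import threading
import time


class VirtualDevice(threading.Thread):
    """Hypothetical device model that services requests in arrival order."""

    def __init__(self, requests, responses):
        super().__init__(daemon=True)
        self.requests = requests
        self.responses = responses
        self.registers = {}

    def run(self):
        while True:
            op, addr, value = self.requests.get()
            if op == "stop":
                return
            if op == "write":
                self.registers[addr] = value
            elif op == "read":
                self.responses.put((addr, self.registers.get(addr, 0)))


class Bus:
    """Driver side view: posted writes and non-posted reads."""

    def __init__(self, requests, responses):
        self.requests = requests
        self.responses = responses

    def write(self, addr, value):
        # Posted: queue the message and return without waiting.
        self.requests.put(("write", addr, value))

    def read(self, addr, timeout=1.0):
        # Non-posted: queue the request, then spin until the completion
        # arrives, yielding the CPU and giving up after the timeout.
        self.requests.put(("read", addr, None))
        deadline = time.monotonic() + timeout
        while True:
            try:
                _, value = self.responses.get_nowait()
                return value
            except queue.Empty:
                if time.monotonic() >= deadline:
                    raise TimeoutError(f"read of {addr:#x} timed out")
                time.sleep(0)   # give other threads a chance to run
```

Since reads and writes travel through the same first in, first out queue, a read issued after a write always observes that write, which mirrors the ordering guarantee described above.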

In addition to basic PCI read and write operations, uvPCI implements x86 specific PCIe restrictions such as 64 bit register reads/writes, message signalled interrupt generation and 128B, 32 bit aligned DMA operations. DMA operations appear to the driver as they would in reality. That is, data appears in DMA mapped buffers asynchronously without the driver’s direct involvement. In practice, uvPCI implements a functionally equivalent, parallel implementation of the PCI stack. Driver writers perform little more than a search/replace and a recompile to switch over to using the real PCI stack.
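One possible reading of the DMA restriction is that each transaction carries at most 128 bytes and that addresses and lengths are multiples of 4 bytes. Under that assumption, which is an interpretation of the text rather than a documented uvPCI rule, a larger transfer could be split as in the Python sketch below. The function name `split_dma` is hypothetical.

```python
MAX_BURST = 128   # assumed maximum bytes per DMA transaction
ALIGNMENT = 4     # assumed 32 bit alignment of addresses and lengths


def split_dma(addr, length):
    """Split one transfer into a list of (address, size) transactions."""
    if addr % ALIGNMENT or length % ALIGNMENT:
        raise ValueError("address and length must be 32 bit aligned")
    bursts = []
    while length > 0:
        size = min(MAX_BURST, length)
        bursts.append((addr, size))
        addr += size
        length -= size
    return bursts
```

For example, a 300 byte transfer starting at address 0x1000 becomes two full 128 byte transactions followed by one 44 byte transaction.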

Results

Work on and with uvNIC is ongoing. Several uvNIC virtual network devices have been implemented and tested on Linux hosts.

Simple 2 Descriptor Test

In the first instance, a simple network device that handles one packet at a time was written. The device had one descriptor slot for each of the transmit and receive directions. Necessarily, the device produced a large amount of virtual bus traffic. However, the design kept both the driver and the virtual hardware simple, which facilitated testing of the concept. Simple networking tests such as ping were used to validate that packet flows in both directions were operational.

Simple Hardware Test

A uvNIC driver was written for and tested against a NetFPGA 10G which was running a simple register and interrupter interface firmware module. This test confirmed that the uvNIC framework can be used to write simple device drivers that are portable to real hardware platforms. The hardware on the NetFPGA did not support full packet transmission.

Fully Featured NetFPGA Driver

To stress the system, a fully featured NetFPGA 10G driver was back-ported onto the uvNIC framework. This very comprehensive test had two aims. Firstly, it showed that the uvNIC toolset was fully featured enough to support a production driver. Secondly, it demonstrated that the concept actually worked. Real ssh tunnels, web browsers and other standard network tools were tested against it. As an example, the following GUI was produced to visualise the internals of the virtual NIC whilst in operation.