Systems Research Group – NetOS

Projects

|

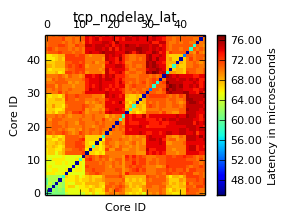

ipc-bench: A UNIX inter-process communication benchmark and results |

|

N4D: Networks for Development |

|

HAT: Hub of All Things |

|

|

REMS: Rigorous Engineering of Mainstream Systems |

|

METRICS: |

|

OCamlLabs: |

|

Trilogy2: |

|

Horizon: the Digital Economy Hub |

|

UCN: Leveraging user data to deliver novel content recommendation systems and content delivery frameworks. |

|

NAAS: Enabling tenants to customise processing of their datacenter traffic in hardware and software. |

|

Q-Sense: The Q Sense project is a collaboration between the Computer Laboratory (Dr. Neal Lathia and Prof. Cecilia Mascolo) and the Department of Public Health and Primary Care (Dr. Felix Naughton and Prof. Stephen Sutton) at the University of Cambridge. Funded by the Medical Research Council, the goal is to develop and refine a novel smoking cessation smartphone sensing app which delivers behavioural support triggered by real-time events.

|

|

Lasagne EU: The aim of the LASAGNE project is to provide a novel and coherent theoretical framework for analysing and modelling dynamic and multi-layer networks in terms of multi-graphs embedded in space and time. To do this, time, space and the nature of interactions are not treated as additional dimensions of the problem, but as natural, inherent components of the very same generalised network description.

|

|

Ubhave: EPSRC Ubiquitous and Social Computing for Positive Behaviour Change (Ubhave). Ubhave's aim is to investigate the power and challenges of using mobile phones and social networking for Digital Behaviour Change Interventions (DBCIs), and to contribute to creating a scientific foundation for digitally supported behaviour change.

|

|

RECOGNITION: Relevance and Cognition for Self-Awareness in a Content-Centric Internet. |

|

C-AWARE: C-AWARE aims to build services to improve users' awareness of their personal energy consumption, and modify their energy demand. |

|

DDDN: Data Driven Declarative Networking. |

|

Spatio-Temporal Network Analysis: Exploiting the importance of both space and time in social network analysis. |

|

EmotionSense: A Mobile Phones based Adaptive Platform for Experimental Social Psychology Research |

|

ITA: International Technology Alliance in Network and Information Science |

|

INTERNET: INTERNET INTelligent Energy awaRe NETworks - a GreenICT project |

|

BRASIL: Characterizing Network-based Applications |

|

Swallow: Photonic Network and Computer Systems |

|

NetFPGA: Programmable Hardware for high-speed network prototypes |

Past projects

- Ciel: A Universal Execution Engine for Distributed Computation

- Skywriting: A Programming Language for the Cloud

- PURSUIT: Pursuing a Publish/Subscribe Future Internet

- Ubiquitous Interaction Devices: Building tomorrow's technology on today's hardware

- Adam: a DNS survey

- COMS: Cambridge Open Mobile Systems

- *probe: GRIDprobe and Nprobe network protocol analysis and monitoring

- Xen: A virtual machine monitor

- Xenoservers: An Open Infrastructure for Global Distributed Computing

- Haggle: Situated and Autonomic Communications

- TINA: The Intelligent Airport

- MASTS: Measurement at All Scales in Time and Space

- WildSensing: Monitoring Badgers

- FRESNEL: Federated Secure Sensor Network Laboratory

- FluPhone: Understanding Spread of Infectious Disease and Behavioural Responses

- EPSRC Molten:Mathematics of Large Technological Evolving Networks

- Space, Time and Social Ties: How Geographic Distance Shapes Online Social Networks

- Latency, Precision, Power, Privacy-aware Sensing and Computation for Mobile Devices

- 46PaQ: IPv4 & IPv6 Performance and QoS

- Pervasive debugging

- Practical lock-free data structures

- NetX: Miscellaneous Networking Projects and Downloads

- Service Level Agreements: Multi-level Assurance of ASP Performance

- Futuregrid: Systems Architecture for Next Generation Public Computing Platforms

- Pebbles: A Framework of Pebbles in Oxygen and Autohan

- Arsenic: User-accessible gigabit networking

- BT-URI: Management of Multi-Service Networks

- Compare: Experimental performance comparison of high speed networks

- DAN: Workstation Architecture

- DCAN: Devolved Control of ATM Networks

- Disk QoS: Enforcing Quality of

- Entropic: Quality of Service in networks

- Efficient Network Routeing: Towards Next-Generation Inter-AS Routeing

- Fairisle: Multi-Service Networks

- Fairisle II: Multi-Service Networks, continuation

- HATS: Security in wide area networks

- HAN: ATM services to and within the home

- LEARNet: shared ATM network testbed

- Measure: Quality of Service in Networks and Operating Systems

- NCAM: Network Control And Management

- Nemesis: General purpose Operating System support for multimedia

- OST: Optical Switch Testbed

- Pegasus: Operating System support for Multimedia

- Pegasus II: Operating System support for Multimedia, continuation

- Plan A: A coherent virtual IO system for a distributed computer