The FABO Database

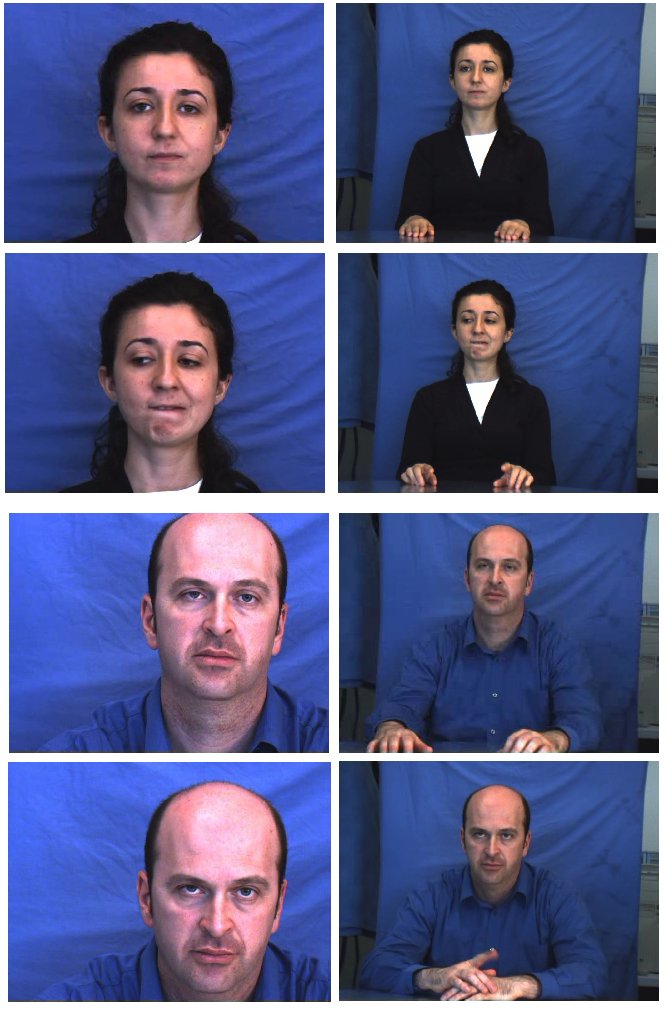

As an integral part of our research on multimodal affective state recognition, we created the Bimodal Face and Body Gesture Database (FABO) for Automatic Analysis of Human Nonverbal Affective Behavior at the University of Technology, Sydney (UTS) in 2005. Posed visual data was collected from volunteers in a laboratory setting by instructing and directing the participants through the required actions and movements. The FABO database contains videos of face and body expressions recorded simultaneously by a face camera and a body camera, as shown in the figures below. This database is the first to date to combine face and body displays in a truly bimodal manner, thereby enabling significant future progress in affective computing research.

The goal of the FABO Database is to provide a data source for research in affective multimodal human-computer interaction. The database aims to help researchers develop new techniques, technologies, and algorithms for the automatic bimodal/multimodal recognition of human nonverbal behaviour and affective states.

To advance the state of the art in multimodal affect recognition, the FABO Database is made available to researchers in the affective computing field on a case-by-case basis only. Before obtaining the database, researchers are required to sign the FABO Database Release Agreement.

Details about the FABO database can be found in the following paper:

H. Gunes and M. Piccardi, "A Bimodal Face and Body Gesture Database for Automatic Analysis of Human Nonverbal Affective Behavior", in Proc. of ICPR 2006, the 18th International Conference on Pattern Recognition, Vol. 1, pp. 1148-1153, Aug. 2006, Hong Kong.