Evaluation clusters

Local cluster

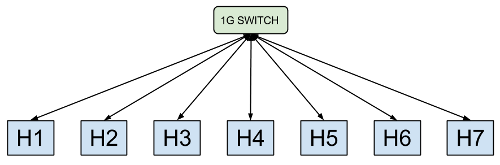

The image above shows the physical topology of our small dedicated test cluster. The cluster comprises seven machines connected via a 1G switch. The table below gives detailed hardware information for each machine.

Host hardware

| Host | Name | Architecture | Cores | Threads | Clock speed (GHz) | Memory (GB) |
|---|---|---|---|---|---|---|
| H1 | freestyle | Intel Gainestown | 4 | 8 | 2.26 | 12 |
| H2 | hammerthrow | Intel Gainestown | 4 | 8 | 2.26 | 12 |
| H3 | backstroke | Intel Gainestown | 4 | 8 | 2.26 | 12 |
| H4 | tigger | AMD Magny Cours | 48 | 48 | 1.9 | 64 |
| H5 | uriel | AMD Valencia | 12 | 12 | 3.1 | 64 |
| H6 | michael | Intel Sandy Bridge | 12 | 24 | 1.9 | 64 |
| H7 | raphael | Intel Sandy Bridge | 12 | 24 | 1.9 | 64 |

EC2 cluster

For many of our experiments, we used large EC2 clusters of 100 m1.xlarge instances. We also used smaller sub-clusters of 16, 32 and 64 instances for back-end execution engines that only support power-of-two node counts (e.g. PowerGraph).
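
As a rough illustration of how the sub-cluster sizes relate to the 100-instance cluster, the sketch below lists the power-of-two node counts that fit within a given cluster size. The function name `power_of_two_sizes` and the `min_nodes` lower bound are hypothetical and chosen for this example only; they are not part of our tooling.

```python
def power_of_two_sizes(max_nodes, min_nodes=1):
    """Return all powers of two in [min_nodes, max_nodes], in ascending order.

    Hypothetical helper: illustrates which sub-cluster sizes are usable by
    engines that only support power-of-two node counts.
    """
    sizes = []
    size = 1
    while size <= max_nodes:
        if size >= min_nodes:
            sizes.append(size)
        size *= 2
    return sizes


# With a 100-instance cluster and an assumed lower bound of 16 nodes,
# this yields the sub-cluster sizes listed above.
print(power_of_two_sizes(100, min_nodes=16))  # [16, 32, 64]
```

The largest usable power-of-two sub-cluster on 100 instances is therefore 64 nodes, since 128 exceeds the cluster size.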

All EC2 clusters ran in the us-east-1a availability zone.

We mostly ran our experiments during US night time in order to reduce the variability in results (which can be up to ±100% in makespan at other times).

VM specification

| Count | Type | vCPUs | Memory (GB) |
|---|---|---|---|
| 100 | m1.xlarge | 4 | 15 |