Hidden Markov models

The underlying probability model is a hidden Markov model,

$$
\begin{array}{ccccccc}
X_0 & \to & X_1 & \to & X_2 & \to & \cdots\\
\downarrow & & \downarrow & & \downarrow & & \\
Y_0 & & Y_1 & & Y_2 & &
\end{array}
$$

where $X_n$ is the location at timestep $n$ and $Y_n$ is the noisy observation.

We wish to compute the distribution of $X_n$ given observations $(y_0,\dots,y_n)$.
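To make the model concrete, here is a minimal sketch that simulates a toy hidden Markov model. The model is an assumption for illustration only: locations are $0,\dots,K-1$ arranged on a ring, $X_0$ is uniform, each step moves $-1$, $0$ or $+1$ with equal probability, and $Y_n$ is $X_n$ displaced by $-1$, $0$ or $+1$. The function name `simulate_hmm` is hypothetical.

```python
import numpy as np

def simulate_hmm(num_steps, num_locations=10, seed=0):
    """Simulate a toy HMM on a ring of locations 0..num_locations-1.

    Hypothetical model: X_0 is uniform; each step X moves -1, 0 or +1
    (equally likely, wrapping round the ring); Y_n is X_n displaced by
    -1, 0 or +1 (equally likely, also wrapping round).
    """
    rng = np.random.default_rng(seed)
    xs = np.empty(num_steps, dtype=int)
    ys = np.empty(num_steps, dtype=int)
    xs[0] = rng.integers(num_locations)
    for n in range(num_steps):
        if n > 0:
            # Markov step: the new location depends only on the previous one
            xs[n] = (xs[n - 1] + rng.integers(-1, 2)) % num_locations
        # Noisy observation: depends only on the current location
        ys[n] = (xs[n] + rng.integers(-1, 2)) % num_locations
    return xs, ys

xs, ys = simulate_hmm(5)
```

The arrows in the diagram correspond to the two lines inside the loop: $X_n$ is generated from $X_{n-1}$ alone, and $Y_n$ from $X_n$ alone.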

We can find this distribution iteratively. Suppose we know the distribution of $(X_{n-1} | y_0,\dots,y_{n-1})$.

Then we can find the distribution of $(X_n | y_0,\dots,y_{n-1})$ using the law of total probability and memorylessness,

$$
\Pr(x_n|y_0,\dots,y_{n-1}) = \sum_{x_{n-1}} \Pr(x_n|x_{n-1})\, \Pr(x_{n-1}|y_0,\dots,y_{n-1})
$$
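When the map has finitely many locations, this sum is a vector-matrix product. The sketch below assumes a transition matrix `P` with `P[i, j]` $=\Pr(X_n=j\mid X_{n-1}=i)$; the function name `predict` and the 3-location example matrix are hypothetical.

```python
import numpy as np

def predict(filtered_prev, P):
    """One prediction step of the exact filter.

    filtered_prev[i] = Pr(X_{n-1}=i | y_0..y_{n-1})
    P[i, j]          = Pr(X_n=j | X_{n-1}=i)   (each row sums to 1)
    Returns pred[j]  = sum_i P[i, j] * filtered_prev[i]
    """
    return filtered_prev @ P

# Hypothetical example: 3 locations on a ring; stay put or move right, each w.p. 0.5
P = np.array([[0.5, 0.5, 0.0],
              [0.0, 0.5, 0.5],
              [0.5, 0.0, 0.5]])
prev = np.array([1.0, 0.0, 0.0])  # we are certain that X_{n-1} = 0
pred = predict(prev, P)           # equals P[0], i.e. [0.5, 0.5, 0.0]
```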

and then we can find the distribution of $(X_n | y_0,\dots,y_n)$ using Bayes's rule and memorylessness,

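$$
\Pr(x_n|y_0,\dots,y_{n-1},y_n) = \text{const}\times\Pr(x_n|y_0,\dots,y_{n-1}) \Pr(y_n|x_n),
$$

where the constant does not depend on $x_n$; it is whatever value makes these probabilities sum to 1 over $x_n$.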
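For a finite map, the Bayes step is an elementwise product followed by normalization, and the normalization is exactly where the constant comes from. This is a minimal sketch; the function name `update` and the example numbers are hypothetical.

```python
import numpy as np

def update(pred, likelihood):
    """One Bayes update step of the exact filter.

    pred[j]       = Pr(X_n=j | y_0..y_{n-1})
    likelihood[j] = Pr(y_n | X_n=j) for the observed value y_n
    Returns Pr(X_n=j | y_0..y_n); the 'const' is 1 / sum_j pred[j] * likelihood[j].
    """
    unnormalized = pred * likelihood
    return unnormalized / unnormalized.sum()

pred = np.array([0.5, 0.5, 0.0])
likelihood = np.array([0.2, 0.6, 0.2])
post = update(pred, likelihood)  # [0.25, 0.75, 0.0]
```

Here the unnormalized values are $0.1, 0.3, 0$, so the constant is $1/0.4$.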

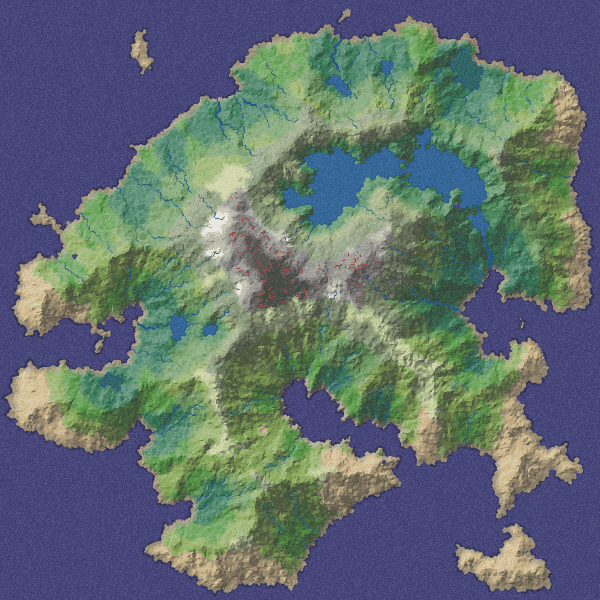

The method is laid out in Example Sheet 4, which you should attempt first, before coding. The example sheet asks you to solve the equations exactly, producing a vector of probabilities, $\Pr(x_n|y_0,\dots,y_n)$ for each location $x_n$ on the map. But when the map is large, it is not practical to compute either the sum or the constant. Instead, we can use an empirical approximation.

Empirical approximation

The idea of empirical approximation is that, instead of working with probability distributions, we work with weighted samples, and we choose the weights so as to approximate the distribution we're interested in. Formally, if we want to approximate the distribution of a random variable $Z$, and we have a collection of points $z_1,\dots,z_n$, we want weights $w_1,\dots,w_n$ such that

$$
\Pr(Z\in A) \approx \sum_{i=1}^n w_i 1_{z_i\in A}.
$$
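The right-hand side is just a weighted count of the points that fall in $A$. A minimal sketch, with a hypothetical helper `empirical_prob` and a small made-up weighted sample; it assumes the weights already sum to 1.

```python
import numpy as np

def empirical_prob(z, w, in_A):
    """Approximate Pr(Z in A) by sum_i w_i * 1[z_i in A].

    z:    array of sample points z_1..z_n
    w:    array of weights (assumed to sum to 1)
    in_A: function returning a boolean array, True where z_i lies in A
    """
    return np.sum(w * in_A(z))

z = np.array([0.0, 1.0, 2.0, 3.0])
w = np.array([0.1, 0.2, 0.3, 0.4])
est = empirical_prob(z, w, lambda z: z >= 2)  # 0.3 + 0.4 = 0.7
```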

We've used weighted samples in two ways in the course:

* For the simple Monte Carlo approximation, we sample $z_i$ from the distribution of $Z$, and we let all the weights be the same, $1/n$.
* For computational Bayes's rule, we sample $z_i$ from the prior distribution, and let the weights be proportional to the likelihood of the data conditional on $z_i$.
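The two weighting schemes can be sketched side by side. The examples are assumptions for illustration: $Z\sim N(0,1)$ with $A=\{z>1\}$ for simple Monte Carlo, and, for computational Bayes's rule, a coin with bias $\theta\sim\text{Uniform}(0,1)$ a priori that showed 7 heads in 10 tosses, so the likelihood is proportional to $\theta^7(1-\theta)^3$ and the exact posterior mean is $8/12$.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 100_000

# Simple Monte Carlo: sample from the distribution of Z itself, equal weights 1/n
z = rng.standard_normal(n)
w_mc = np.full(n, 1 / n)
p_mc = np.sum(w_mc * (z > 1))          # approximates Pr(Z > 1), about 0.159

# Computational Bayes's rule (hypothetical coin example):
# sample theta from the prior, weight by the likelihood of the data
theta = rng.uniform(0, 1, n)
lik = theta**7 * (1 - theta)**3        # Pr(7 heads in 10 | theta), up to a constant
w_bayes = lik / lik.sum()              # weights proportional to likelihood, summing to 1
post_mean = np.sum(w_bayes * theta)    # approximates the posterior mean 8/12
```

In both cases the weights sum to 1; the only difference is where the samples come from and how they are weighted.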

This practical will use an empirical approximation technique called a particle filter.

In the particle filter, we'll use weighted samples to approximate the distributions of $(X_n | y_0,\dots,y_{n-1})$ and of $(X_n|y_0,\dots,y_n)$. Each sample is called a particle.
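One way to combine the two steps with particles is sketched below. Everything about the model is a hypothetical stand-in, not the practical's own map: $K=20$ locations on a ring, the same $-1/0/+1$ random walk as before, and a sensor that reads the true location with probability 0.8 and otherwise reports a uniformly random location. Moving each particle through the Markov chain (keeping its weight) gives weighted samples for $(X_n|y_0,\dots,y_{n-1})$; multiplying the weights by $\Pr(y_n|x_n)$ and renormalizing gives weighted samples for $(X_n|y_0,\dots,y_n)$. The names `transition`, `likelihood` and `particle_filter_step` are illustrative, and the sketch omits any resampling step.

```python
import numpy as np

K = 20  # hypothetical number of locations on a ring

def transition(x, rng):
    """Sample X_n given X_{n-1}=x: step -1, 0 or +1 with equal probability (hypothetical)."""
    return (x + rng.integers(-1, 2, size=x.shape)) % K

def likelihood(y, x):
    """Pr(y | x) = 0.8 * 1[y == x] + 0.2 / K: true reading w.p. 0.8, else uniform (hypothetical)."""
    return 0.8 * (y == x) + 0.2 / K

def particle_filter_step(particles, weights, y, rng):
    # Predict: move each particle through the Markov chain; with unchanged
    # weights, the particles now approximate (X_n | y_0..y_{n-1})
    particles = transition(particles, rng)
    # Update: multiply by the likelihood of y_n and renormalize; the
    # particles now approximate (X_n | y_0..y_n)
    weights = weights * likelihood(y, particles)
    weights = weights / weights.sum()
    return particles, weights

rng = np.random.default_rng(0)
m = 100_000
particles = rng.integers(K, size=m)    # samples of X_0 from a uniform prior
weights = likelihood(3, particles)     # condition on a hypothetical y_0 = 3
weights = weights / weights.sum()
for y in [4, 5, 5, 6]:                 # hypothetical observations y_1..y_4
    particles, weights = particle_filter_step(particles, weights, y, rng)

p6 = weights[particles == 6].sum()     # estimate of Pr(X_4 = 6 | y_0..y_4)
```

The particles never need the sum over $x_{n-1}$ or the normalizing constant to be computed over the whole map; the only normalization is over the $m$ particles.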