Computer Architecture Group

BIMPA Project: Biologically inspired massively parallel architectures

- computing beyond a million processors

Duration

This 5.5-year project finished in June 2014.

Motivation

The human brain remains one of the great frontiers of science - how does this organ upon which we all depend so critically actually do its job? A great deal is known about the underlying technology - the neuron - and we can observe large-scale brain activity through techniques such as magnetic resonance imaging, but this knowledge barely starts to tell us how the brain works. Something is happening at the intermediate levels of processing that we have yet to begin to understand, but the essence of the brain's information processing function probably lies in these intermediate levels. Getting at these middle layers requires that we build models of very large systems of spiking neurons, with structures inspired by the increasingly detailed findings of neuroscience, in order to investigate the emergent behaviours, adaptability and fault-tolerance of those systems.

Our goal in this project was to deliver machines of unprecedented cost-effectiveness for this task, and to make them readily accessible to as wide a user base as possible. We also explored the applicability of the unique architecture that has emerged from the pursuit of this goal to other important application domains.

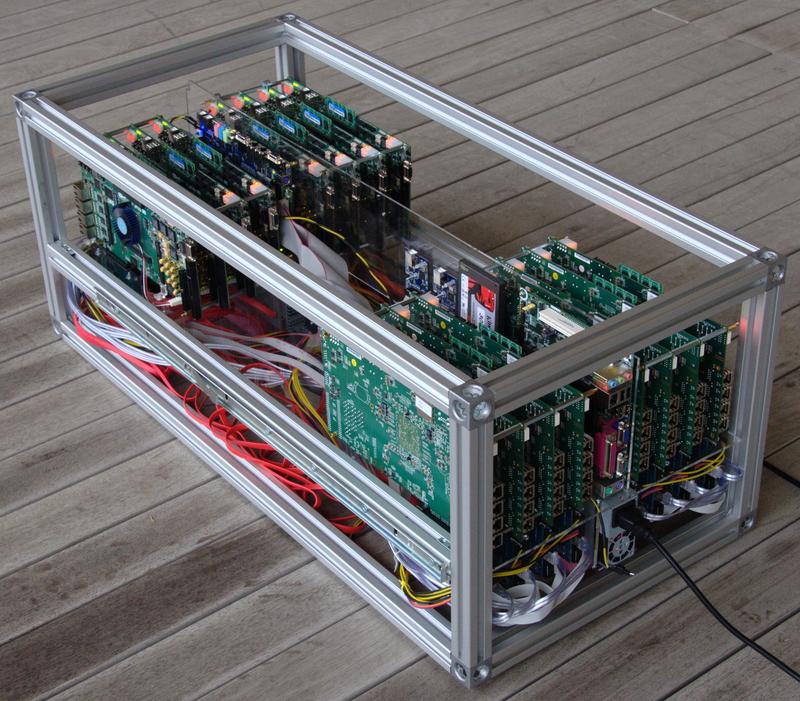

As part of this project we built the Bluehive neural network computer to provide a large system of FPGAs connected by high-speed low-latency links, which we believe is key to simulating neural networks with millions of neurons and billions of synaptic connections.

Collaboration

In Cambridge we are performing architectural exploration, using large numbers of FPGAs to prototype systems.

The other partners on this project are:

- Prof. Steve Furber (University of Manchester APT Group) who is refining the SpiNNaker architecture.

- Prof. Andrew Brown (University of Southampton ESD group) who is looking at algorithm mapping.

- Prof. David Allerton (University of Sheffield ACSE group) who is investigating parallel languages.

Related eFutures Funding

We also received a small amount of funding to explore code generation for neural computation and held a workshop toward the end of this project.

Investigators

Funding

Funded by EPSRC - grant code EP/G015783/1

Key publications

Matthew Naylor and Simon W. Moore, Rapid codesign of a soft vector processor and its compiler, 24th International Conference on Field Programmable Logic and Applications (FPL2014), 2-4 September 2014. (PDF)

Paul J. Fox, A. Theodore Markettos, Simon W. Moore and Andrew W. Moore, Interconnect for commodity FPGA clusters: standardized or customized?, 24th International Conference on Field Programmable Logic and Applications (FPL2014), 2-4 September 2014. (PDF)

Paul J. Fox, A. Theodore Markettos and Simon W. Moore, Reliably Prototyping Large SoCs Using FPGA Clusters, 9th International Symposium on Reconfigurable Communication-centric Systems-on-Chip (ReCoSoC'2014), 26-28 May 2014.

Matthew Naylor, Paul J. Fox, A. Theodore Markettos and Simon W. Moore, Managing the FPGA Memory Wall: Custom Computing or Vector Processing?, 23rd International Conference on Field Programmable Logic and Applications (FPL), September 2013.

Simon W. Moore, Paul J. Fox, Steven J.T. Marsh, A. Theodore Markettos and Alan Mujumdar, Bluehive - A Field-Programmable Custom Computing Machine for Extreme-Scale Real-Time Neural Network Simulation, 20th International Symposium on Field-Programmable Custom Computing Machines (FCCM), pages 133-140, April 2012 (PDF preprint) (DOI: 10.1109/FCCM.2012.32).

Video

A demo of a simple character recognition spiking neural network from the Nengo simulator, running on our DE4 FPGA Tablet.

(Other formats of this video are available from http://sms.cam.ac.uk/media/1446267.)