01. Moviestorm

From script to final cut, Moviestorm will take you from initial concept to finished movie. It's easy to use, full-featured and compatible with other movie-making tools, making it suitable for first-time movie makers and advanced users alike.

Contact: Matt Kelland

02. AlertMe Smart Energy

AlertMe will allow users to monitor and control their whole home's energy use, from electricity to heating and hot water. Connected to the Internet via the home's broadband connection, it continually measures and controls appliances and monitors and manages overall energy use. Users can view information online or via their mobile phone, adjust heating controls, turn off switches and schedule use for off-peak times. To be launched at the end of the year, it will save up to 25% on energy bills and approximately one tonne of CO2 per home each year.

03. SenseSurface

SenseSurface, from Girton Labs, Cambridge, is an innovative 2mm-thin (plus battery) computer hardware platform for embedding in paper or card, or for adhering to the human body. SenseSurface paper has sensing functions such as touch, motion and heat, can log data for up to one year, and has visual and audio outputs. A new type of manufacturing process eliminates the normal copper printed circuit board, making the hybrid paper computer very thin, flexible, writable and robust. Novel environmental sensors allow very long battery life, up to one year. Applications include time-sensitive sticky notes for Alzheimer's patients, timed reminders on appointment cards for business meetings, and interactive business cards. Ordinary paper can have computer functions built in, such as reminders to pay paper invoices. Because the computer is 2mm thin and can include motion sensing, it can be attached as a sticky label for finding lost objects, e.g. mobile phones. The first applications will be medical: sensing plasters worn on the skin.

04. Scent Whisper

'Scent Whisper' is a responsive jewellery project that provides a new way to send a scented message by fusing emerging technologies with perfumery, creating a new level of experience and wellbeing as well as a novel communication system.

Contact: Dr Jenny Tillotson

05. Phonetic Arts 2009

Phonetic Arts delivers technology that generates natural, expressive speech, allowing computer games to say any sentence in any type of voice. With world-leading expertise in all aspects of speech technology, our goal is to free games developers from the constraints of recorded samples and allow them to create rich, expressive and responsive dialogue within their games.

Contact: Steve Tyson

06. Cilla

Cilla is a prototype system for browsing digital photograph collections in an interactive and intuitive way. It is a deliberate attempt to break away from the "slide show" concept, displaying multiple images on the screen at any one time and allowing the user to guide the process depending on what interests them.

|

|

|

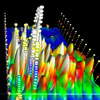

07. Imagetron

Imagetrons (of Visual Perception) are a set of image browsing methods in three-dimensional space. They provide a way of browsing large sequences of images simultaneously on a computer display, such that the images and their sequence may be easily perceived and identified. Geometries found in nature, such as spirals and conic helixes, are used to compose and present intuitive visualisations of image sequences.

Contact: Vangelis Pappas-Katsiafas

08. Gesture interface for large panel

Toshiba Research Europe has developed a hand-pointing interface that uses a single camera. Sample applications include a photo and video viewer as well as an application to inspect 3D models.

The system uses multiple trackers in a cascaded structure. The idea is to apply the most precise method possible while being able to fall back to less precise but more robust trackers.

Contact: Bjorn Stenger
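The cascaded structure described above can be illustrated as an ordered list of trackers, tried from most precise to most robust, where the first tracker that reports a confident result wins. This is a minimal sketch under our own assumptions: the tracker interface, the tracker names ("fingertip", "hand-blob"), the confidence score and the 0.5 threshold are all hypothetical and are not taken from Toshiba's system.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

# Hypothetical interface: a tracker takes a frame and returns (x, y, confidence),
# or None if it has lost the hand.
TrackResult = Optional[Tuple[float, float, float]]


@dataclass
class Tracker:
    name: str
    track: Callable[[object], TrackResult]


def cascaded_track(frame: object, trackers: List[Tracker],
                   min_confidence: float = 0.5) -> Optional[Tuple[str, float, float]]:
    """Try trackers in order (most precise first) and return the first confident hit."""
    for tracker in trackers:
        result = tracker.track(frame)
        if result is not None and result[2] >= min_confidence:
            x, y, _ = result
            return tracker.name, x, y
    return None  # every tracker failed on this frame


# Stub trackers: the precise one loses the hand, so the robust one is used.
precise = Tracker("fingertip", lambda frame: None)
robust = Tracker("hand-blob", lambda frame: (120.0, 80.0, 0.9))
print(cascaded_track("frame-0", [precise, robust]))  # ('hand-blob', 120.0, 80.0)
```

Ordering the list by precision means the robust tracker only takes over on frames where the precise one fails or is unsure.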

09. Emotion AI

For digital character content creators and developers who are dissatisfied with the cost and quality constraints of traditional animation systems, Dynamic Personality and Emotion Synthesis offers an animation toolkit plugin that automates lifelike digital character content creation, and a Software Development Kit that automates the control of run-time character behaviour.

Contact: Ian Wilson

10. Inclusive Design Simulators

Impairment simulation helps people understand how capability loss affects the ability to interact with a product or service, or to perform normal everyday activities. This understanding enables people to make better-informed decisions throughout the design process, so that the output can better satisfy the needs of more people. This interactive demonstration will include wearable impairment simulators that reduce the functional abilities of the eyes and hands, and software impairment simulators that modify image and sound files.

Contact:

11. Haptics while moving

Existing mobile information systems often require users to transfer attention from their environment to a digital representation of the location, which can be complex and frustrating. Our mobile prototype allows people to explore their environment in a more 'heads-up' way by providing directional haptic feedback. By pointing and scanning a mobile device, the user can discover related digital information about their surroundings while remaining immersed in the physical world.

Contact: Simon Robinson
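One way to realise pointing-and-scanning with directional haptic feedback is to compare the device's compass heading with the bearing from the user to each point of interest, and to vibrate when the difference falls inside a narrow angular window. The sketch below is an illustration under our own assumptions, not the prototype's implementation: it uses a flat-earth approximation that is only reasonable over short distances, a hypothetical 15-degree window, and made-up place names and coordinates.

```python
import math
from typing import Dict, List, Tuple


def bearing_deg(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Approximate compass bearing (0 = north, clockwise) from point 1 to point 2.

    Uses an equirectangular (flat-earth) approximation, adequate over short distances.
    """
    mean_lat = math.radians((lat1 + lat2) / 2)
    dx = math.radians(lon2 - lon1) * math.cos(mean_lat)  # eastward component
    dy = math.radians(lat2 - lat1)                        # northward component
    return math.degrees(math.atan2(dx, dy)) % 360


def angle_diff(a: float, b: float) -> float:
    """Smallest absolute difference between two headings, in degrees."""
    return abs((a - b + 180) % 360 - 180)


def targets_in_beam(user: Tuple[float, float], heading: float,
                    pois: Dict[str, Tuple[float, float]], window: float = 15.0) -> List[str]:
    """Return the points of interest the device is pointing at (a device would vibrate if any)."""
    return [name for name, (lat, lon) in pois.items()
            if angle_diff(heading, bearing_deg(user[0], user[1], lat, lon)) <= window]


# Hypothetical points of interest: one due north, one due east of the user.
pois = {"north_cafe": (52.21, 0.12), "east_museum": (52.20, 0.13)}
print(targets_in_beam((52.20, 0.12), 5.0, pois))   # ['north_cafe']
print(targets_in_beam((52.20, 0.12), 90.0, pois))  # ['east_museum']
```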

12. Designing Tangibles for Learning

Explore the physics of light by manipulating everyday objects on a DIY interactive surface built for children.

Contact: Jennifer G. Sheridan

13. Healthradar

From Nokia Research Cambridge

14. MORPH

From Nokia Research Cambridge

Contact: Piers Andrew

15. ProFORMA: Probabilistic Feature-based On-line Rapid Model Acquisition

The generation of 3D models of real objects is very useful for many computer vision applications. ProFORMA is a system designed to enable on-line reconstruction of textured 3D objects rotated by a user's hand. Partial models are created very rapidly and displayed to the user to aid view planning, as well as being used by the system to track the object robustly.

16. FrontlineSMS

FrontlineSMS is free, open-source software that turns a laptop and a mobile phone into a central communications hub. Once installed, the program enables users to send and receive text messages with large groups of people through mobile phones, without the need for the internet. Aimed at grassroots non-profits, FrontlineSMS is today being used in over 50 countries around the world in a wide range of social change activities.

17. Vision-based Augmented Reality for Mobile Phones

Vision-based augmented reality for mobile phones can turn real objects (such as simple printed posters) into active surfaces, containing virtual 3D models and animations that appear to be attached to the physical surface when viewed with a mobile phone camera. This early-stage work focuses on the problem of recognising when a known target is in the image and calculating the position of the camera so that the virtual objects can be rendered convincingly into the camera view.
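Once the camera position relative to the known target has been estimated, drawing the virtual objects in the right place comes down to projecting their 3D points through the same camera model. This is a minimal pinhole-camera sketch, assuming the pose (rotation R, translation t) and the intrinsics (focal length f in pixels, principal point cx, cy) are already known; the numbers are illustrative, and the recognition and pose-estimation steps themselves are not shown.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = List[List[float]]


def project(point: Vec3, R: Mat3, t: Vec3, f: float, cx: float, cy: float) -> Tuple[float, float]:
    """Project a 3D point in target (poster) coordinates to pixel coordinates.

    R, t: camera pose mapping target coordinates into the camera frame.
    f: focal length in pixels; (cx, cy): principal point.
    Assumes the camera looks along +z and image y grows downwards.
    """
    # Rigid transform into the camera frame: X_c = R * X + t
    xc = [sum(R[i][j] * point[j] for j in range(3)) + t[i] for i in range(3)]
    if xc[2] <= 0:
        raise ValueError("point is behind the camera")
    # Perspective division followed by the intrinsic mapping
    u = f * xc[0] / xc[2] + cx
    v = f * xc[1] / xc[2] + cy
    return u, v


# Illustrative pose: poster facing the camera, 2 units in front of it.
I3 = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
print(project((0.0, 0.0, 0.0), I3, (0.0, 0.0, 2.0), f=500.0, cx=320.0, cy=240.0))  # (320.0, 240.0)
print(project((0.2, 0.1, 0.0), I3, (0.0, 0.0, 2.0), f=500.0, cx=320.0, cy=240.0))  # about (370, 265)
```

Any error in the estimated pose shows up directly as the virtual object drifting off the poster, which is why accurate camera-position estimation matters for convincing rendering.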

18. VPlay

Built around a multi-touch surface, VPlay allows users to manipulate video in real time. Digital objects representing video clips, effects and mixers are displayed on the surface and can be easily arranged to create an ever-changing video output. Designed to support the practice of VJing, or live video mixing, the novel user interface opens up new possibilities for collaboration and explores the use of touch interfaces in new environments.

Contact: Stuart Taylor

19. Anglia Ruskin University Digital Performance Laboratory

Musical performance technologies that restore the body to music, including 'Gaggle' ultrasound sensors; the 'Manta', a 48-pad pseudo-pressure-sensitive interface; the Arduino and the Teabox, custom-designed and off-the-shelf interfaces; and 'Tedor', for converting EEG data into music-compatible formats, for instance for use with the audio programs MaxMSP and SuperCollider.

Contact: Richard Hoadley (Gaggle), Tom Hall (Manta) or Krisztián Hofstädter (Tedor).

19. Digital Dance

Jane Turner's Troop company are creating a site-specific dance work for the festival, with live mixed music and visuals from the Cambridge community of composers and digital media researchers.

20. Low-Fi Skin Vision

The e-sense project, based at the Open University and the University of Edinburgh, is investigating how technology can extend our senses and bodies. We are demonstrating a sensory substitution experiment in which a person can learn to see through their skin. Participants are blindfolded and have to try to hit a ball as it is rolled towards them. A camera image of the ball and the person's hand is converted into vibrations on their abdomen. People generally learn very quickly to sense the movement of the ball and bat it with their hand.

Contact: Jon Bird
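The conversion from camera image to vibrations can be illustrated by dividing a greyscale frame into a coarse grid, one cell per vibration motor, and driving each motor with the average brightness of its cell. This is a sketch under our own assumptions: the 4 by 4 motor layout, the 0 to 255 brightness range and plain averaging are hypothetical choices, not details of the e-sense apparatus.

```python
from typing import List


def image_to_motor_grid(image: List[List[int]], rows: int = 4, cols: int = 4) -> List[List[float]]:
    """Average a greyscale image (values 0..255) into a rows x cols grid of motor intensities in [0, 1]."""
    height, width = len(image), len(image[0])
    grid = []
    for r in range(rows):
        # Pixel rows covered by this motor row
        y0, y1 = r * height // rows, (r + 1) * height // rows
        row = []
        for c in range(cols):
            # Pixel columns covered by this motor column
            x0, x1 = c * width // cols, (c + 1) * width // cols
            cell = [image[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            # Empty cells (image smaller than the grid) leave the motor off
            row.append(sum(cell) / (len(cell) * 255) if cell else 0.0)
        grid.append(row)
    return grid


# An 8x8 dark frame with a bright "ball" in the top-left corner
frame = [[255 if y < 2 and x < 2 else 0 for x in range(8)] for y in range(8)]
for motor_row in image_to_motor_grid(frame):
    print(motor_row)
```

Only the top-left motor is driven here; as the ball moves across the frame, the active motor moves across the grid on the skin.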

21. LEGO Mindstorms for informed metrics in virtual worlds

The research is a collaboration between Future University Hakodate (Japan), Yokohama National University (Japan) and Teesside University (UK), funded by the UK Prime Minister's Initiative (PMI2) award and JAIST, Japan. Virtual worlds such as Second Life are attracting much interest among educators. However, successful implementation of pedagogical practices and meaningful learning has yet to emerge. We are using the collaborative construction of LEGO robots and circuits in virtual worlds to research metrics for assessing synchronous and asynchronous task processes and outcomes.

22. Active Bats

The Active Bat system.

Contact: Simon Hay

23. SpARC - Supplementary Assistance for Rowing Coaching

Sensing how people move their bodies, using gaming controllers as low-cost infra-red cameras and a novel system that responds to applied pressure, allows the computer to begin to take some responsibility for human-computer interaction by interpreting our behaviour. This work investigates the potential for providing automated, reliable and high-fidelity supplementary feedback to athletes (specifically rowers, in conjunction with their coaches) on the quality of their technique and how they could improve it.

Contact: Simon Fothergill

24. Proteus

Proteus is an interactive application that makes it straightforward even for novice users to obtain virtual three-dimensional models of real-world objects from digital video sequences. This is facilitated by the user tracing object outlines in key frames of the video, i.e. the user "paints" on the video. The process of doing so is supported by a novel guidance technique that interactively "snaps" the annotations to object outlines. As a result, Proteus can be used for rapid interactive capture of 3D models that can be used in virtual and augmented reality scenarios such as computer games, online worlds and urban modelling.

Contact: Malte Schwarzkopf

25. 'Magic Board Games' - Draughts Robot

This draughts-playing robot was built as a second-year Computer Science group project. It has an AI engine for human-vs-computer gameplay and uses a system of magnets to move pieces and detect the user's moves, so the human player can interact with the game just as they would with a regular draughts board and a human opponent.

26. bodyLAB

bodyLAB is a group of activities to help technology designers feel how people use their bodies when communicating. Through the experience gained in these exercises, we hope that our participants gain a larger repertoire of physical examples to draw upon when designing technology that is 'off the desk'.

27. ArgentVision

A third of elderly men and women live on their own and have little contact with friends and family: more than 3.6m people in the UK, according to a Help the Aged study from 2008. The study also found that isolation and loneliness are increasing among the elderly, and that this is a major contributing factor to declining health and quality of life. What can we do about this? ArgentVision is an innovative new technology startup that aims to connect the online community with offline, elderly relatives.

28. BrickBox

BrickBox is a system built on a multi-user touch interface which shows, as a proof of concept, how multiple users can collaborate when creating CAD designs. BrickBox was the winner of the 2009 Cambridge computer science undergraduate design competition.

29. Camvine CODA

CODA is a plug-and-play system for taking your digital information from the network (be it calendars, news feeds, Twitter streams, live reports or videos) and putting it on screens without having to set up and manage computers; it is controlled through a simple web interface. CODA is not just about displaying dynamic content: it is also designed to allow flexible control, and we'll be demonstrating some of this flexibility via mobile and tangible interfaces.

30. Real-time and context-aware public transport information

Increasing amounts of real-time transport data are being collected; however, these data are often not easily accessible on the move. This project explores the use of mobile phones as a delivery platform for real-time public transport information in Cambridgeshire.

31. Haptic feedback assistance for motion impairment

Current interface design practices are based on user models and descriptions derived largely from studies of able-bodied users. However, such users are only one point on a wide and varied scale of physical capabilities. The overall aim of this research is to contribute to the enhancement of accessible input systems and interfaces. We now focus on investigating software-based enhancements of cursor movement for all motion-impaired users, whether their impairment is situational or health-related, and contrast these with haptic techniques derived from our previous work.

32. talks.cam.ac.uk

Talks.cam is a user-generated content system for publicising and syndicating information about talks and seminars around Cambridge. Talks.cam makes it easy to find information about events in Cambridge, and for seminar organisers to publicise those events. Talks.cam also feeds customised "what's on" information directly into the webpages of many organisations in Cambridge. Talks.cam turns the university inside out, accumulating research across disciplines, publicising and archiving current research without requiring 'knowledge transfer' intermediaries such as publishers and research funding offices.

Contact: Talks.cam webmaster

33. Voice-driven tourist information system

The latest statistical spoken dialogue system developed by the world-leading speech group in the Cambridge University Engineering Department will be presented. This is an intelligent voice-driven tourist information system: users can talk to the computer in natural language to ask for information about bars, restaurants, hotels, etc. The system adapts to changes in the user's goals and can correct its own mistakes as the dialogue progresses. It can be accessed by phone, web browser and desktop program.

Contact: Dr. Kai Yu

34. NIKVision

NIKVision is a tabletop solution that allows kindergarten children to benefit from the new pedagogical possibilities that tangible interaction and tabletop technologies offer for manipulative learning. It uniquely combines low-cost tangible interaction and tabletop technology with tutored learning. The design has been based on observing children using the technology, letting them play freely with our games during play sessions.

Contact: Javier Marco Rubio

35. MealPlanner

Unhealthy eating is a problem of great importance in Western society, with obesity alone recently estimated to cost society 150 billion dollars per year in the USA, as well as causing great individual suffering. In this demonstration we present a prototype of a new meal planning system aimed at giving individuals the knowledge and control they need to change their food-related behavior.

36. Enlighten

Enlighten is the only genuine real-time radiosity lighting product for cross-platform game development. Enlighten means freedom to create interactive worlds with fully dynamic lighting.

Enlighten redefines how lighting is handled and is driving a new generation of games that truly rival film for their control of mood and atmosphere.

37. Adaptable Scientific Summariser

A scientific paper summarisation system that can be adapted to different target audiences (novices or experts). The current version works as a generic single-document sentence-extraction summariser. It provides several sentence selection techniques based on each sentence's rhetorical status, lexical features and the document's content words.

Contact: Marcis Pinnis
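For readers unfamiliar with sentence-extraction summarisation, its simplest form scores each sentence by the document frequency of the content words it contains and keeps the top-scoring sentences in their original order. The sketch below uses only that content-word feature; the tiny stopword list, the naive sentence splitter and the averaging are our own simplifications, and it does not reproduce the system's rhetorical-status or lexical features.

```python
import re
from collections import Counter
from typing import List

# A tiny illustrative stopword list (an assumption; real systems use far larger lists).
STOPWORDS = {"the", "a", "an", "of", "and", "to", "in", "is", "are", "we",
             "this", "that", "for", "on", "with"}


def content_words(text: str) -> List[str]:
    """Lower-cased alphabetic tokens with stopwords removed."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def summarise(document: str, n_sentences: int = 2) -> List[str]:
    """Return the n highest-scoring sentences, in document order."""
    # Naive sentence split: break after ., ! or ? followed by whitespace
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", document) if s.strip()]
    freq = Counter(content_words(document))

    def score(sentence: str) -> float:
        words = content_words(sentence)
        # Average (not total) frequency, so long sentences are not automatically favoured
        return sum(freq[w] for w in words) / len(words) if words else 0.0

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    return [sentences[i] for i in sorted(ranked[:n_sentences])]


doc = "Gene expression is measured. Gene expression varies across tissues. The weather was nice."
print(summarise(doc, 1))  # ['Gene expression is measured.']
```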

38. Vibrotactile information for speed regulation

Mobile sports computers, such as heart rate monitors, traditionally rely on visual and auditory feedback (i.e. an LCD display and beep alerts). However, while jogging in urban surroundings, for example, traffic noise can drown out the beep alerts, and the need to focus attention on the environment can prevent efficient use of the visual display. Presenting information via the sense of touch therefore seems a promising way to develop safer and more user-friendly devices for sports computer users. We demonstrate one possible way to convey information with a vibrating wristband and chest belt to regulate, for example, running speed during physical exercise.

Contact: Jani Lylykangas
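As one concrete, hypothetical example of regulating running speed by touch, the device can compare the measured speed with a target band and choose a vibration cue only when a correction is needed. The target speed, the 0.5 km/h tolerance and the cue names below are our own illustrative assumptions; the actual vibration patterns used in the demonstration are not specified here.

```python
from enum import Enum


class Cue(Enum):
    SPEED_UP = "speed up"     # hypothetical pattern, e.g. short pulses on the wristband
    SLOW_DOWN = "slow down"   # hypothetical pattern, e.g. a long pulse on the chest belt
    NONE = "no vibration"     # within the target band: stay silent


def speed_cue(speed_kmh: float, target_kmh: float, tolerance_kmh: float = 0.5) -> Cue:
    """Choose a vibrotactile cue by comparing the current speed with a target band."""
    if speed_kmh < target_kmh - tolerance_kmh:
        return Cue.SPEED_UP
    if speed_kmh > target_kmh + tolerance_kmh:
        return Cue.SLOW_DOWN
    return Cue.NONE


for speed in (9.0, 10.2, 11.0):
    print(speed, speed_cue(speed, target_kmh=10.0).value)
# 9.0 speed up
# 10.2 no vibration
# 11.0 slow down
```

Staying silent inside the band keeps the runner's attention free for the environment, which is the motivation given above for moving away from visual and audio feedback.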

39. ArrayExpress

The Gene Expression Atlas is a semantically enriched database of meta-analysis-based summary statistics over a curated subset of the ArrayExpress Archive, serving queries for condition-specific gene expression patterns as well as broader exploratory searches for biologically interesting genes and samples.

Contact: European Bioinformatics Institute

40. BioCatalogue

BioCatalogue is a centralised registry of curated Life Science Web Services. It allows users to easily publish, discover, annotate and monitor Life Science Web Services. Over 1,000 web services have been published in the catalogue to date.

Contact: European Bioinformatics Institute

41. The European Genome-phenome Archive (EGA)

The European Genome-phenome Archive is a service for the permanent storage and sharing of all types of personally identifiable genetic and phenotypic data. The archive model is optimized for high security and optimal performance with large data sets, and it currently houses information on more than 20,000 individuals. Strict protocols govern how information is managed, stored and distributed. An independent Ethics Committee audits the EGA protocols and infrastructure.

Contact: European Bioinformatics Institute

42. Space Maps Gene Expression Data Visualization

The Space Maps visualization technique enables biologists to interactively explore gene expression data sets to generate hypotheses, which can be used to guide further analysis and interpretation of the data. This novel technique visualizes the expression pattern of each gene as a two-dimensional image, and knowledge- or data-derived similarity relationships between the genes are represented by arranging the corresponding images on the screen.

Contact: Nils Gehlenborg

43. Dasher

Dasher is an information-efficient text-entry interface. It can be driven by continuous pointing gestures (e.g. a head tracker) or by a variety of button modes. These modes, and a version using the novel sensors of an iPhone, will be demonstrated.

44. ShapeWriter

ShapeWriter is an efficient and fluid text entry method for mobile phones. To write words you simply slide your finger over a touch-screen keyboard. It will be demonstrated on an iPhone.

45. Video Dasher

Video Dasher is a new concept in video navigation. Finding a previously viewed scene using the standard time bar is time-consuming. Video Dasher uses the powerful human visual processing system to make video navigation more efficient.

46. OpenGazer

OpenGazer is an open source library that converts images captured from an ordinary webcam into continuous or binary signals that can be used as elementary computer interface devices. Simple gestures (such as continuous head motions) can then be used to communicate using programs such as Dasher and Nomon.

47. Nomon

Nomon provides a general way of using a single switch to communicate, which is faster and requires fewer gestures than standard grid methods.

47a. Parakeet

Parakeet is a system for efficient mobile text entry using speech and a touch-screen interface. Users enter text into their mobile Linux device (such as the Nokia N800) by speaking into a Bluetooth microphone. The user then reviews and corrects the recognizer's output using an interface based on a word confusion network.

47b. Speech Dasher

Speech Dasher is a novel interface for the input of text using a combination of speech and navigation via a pointing device (such as a mouse). A speech recognizer provides the initial guess of the user's desired text while a navigation-based interface allows the user to confirm and correct the recognizer's output.

50. Emotionally Intelligent Interfaces

Existing human-computer interfaces are mind-blind: oblivious to the user's mental states and intentions. Drawing inspiration from psychology, computer vision and machine learning, our team in the Computer Laboratory at the University of Cambridge has developed mind-reading machines, computers that implement a computational model to infer mental states of people from their facial signals. The goal is to enhance human-computer interaction through empathic responses, to improve the productivity of the user and to enable applications to initiate interactions with and on behalf of the user, without waiting for explicit input from that user.

Contact: Peter Robinson

51. Audio Networking

Audio networking revolves around the use of sound as a medium for sending and receiving information, whether for human or electronic ears. The aim of this project has been to demonstrate the feasibility of audio networking on a mobile platform, namely Google's Android phones. An application that transmutes pictures into sound has been developed, and an interactive replacement for frustrating touch-tone phone menus is in the works.

52. Emotional Robots

We will demonstrate a zoomorphic robot, Virgil, and a humanoid robot, Elvis, which are able to mimic head gestures and facial expressions in real time. The idea behind this research is to develop robots that are able to sense and appropriately respond to the emotions of people interacting with them, in order to help make interacting with them more natural.

Contact: Laurel Riek

53. CREATOR Capture

The CREATOR Capture project investigated creative applications of motion capture, and also the ways that value is created and captured during collaborations between technical and creative researchers. We are demonstrating an experimental platform that emerged from collaboration between the Cambridge Computer Laboratory Rainbow Group, and Culture Lab Newcastle.

54. The INtelligent Airport (TINA) Passive UHF RFID Distributed Antenna

We will be demonstrating a radio-over-fibre system supporting passive UHF RFID. Our approach allows a shared infrastructure to be used for both wireless sensing and communications (e.g. Wi-Fi / 3G) while also enabling an expanded field of view for passive UHF RFID and an increased likelihood of tag detection.

Contact: Michael Crisp

55. Language learning on mobile phones

The mobile language learning application is being developed as one of the Computer Laboratory's Undergraduate Research Opportunities Projects. The application makes use of the smartphone multimedia features that can assist in language learning. It also enables users to collaboratively create and share the learning material using their phones.

Contact: Vytautas Vaitukaitis

56. Unwrap Mosaic

Contact: Pushmeet Kohli

57. Focal Ring Interaction

In focal ring interaction, the user's touch gestures are interpreted relative to a graphical ring that occupies the centre of the interface. By assigning different effects to strokes into, out of, across, past and around such rings, focal ring interfaces allow the user to dynamically switch between implicit interaction modes (e.g. panning and zooming a map) within a single continuous stroke. This opens up new opportunities for casual, expressive and powerful single-touch interactions in applications where multi-touch is either unavailable, inappropriate, or used for higher-level interaction.

Contact: Darren Edge
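A simplified way to tell these stroke types apart is to check whether a stroke starts and ends inside or outside the ring and, for strokes that never enter it, how much angle they sweep around the ring's centre. The sketch below is our own approximation, not the published focal ring design: the "inside" label, the 270-degree threshold for "around" and the classification rules are all assumptions, and a real interface would classify continuously during the stroke rather than after it ends.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def classify_stroke(stroke: List[Point], centre: Point, radius: float,
                    around_threshold_deg: float = 270.0) -> str:
    """Classify a completed stroke relative to a circular ring."""
    cx, cy = centre

    def inside(p: Point) -> bool:
        return math.hypot(p[0] - cx, p[1] - cy) <= radius

    start_in, end_in = inside(stroke[0]), inside(stroke[-1])
    if start_in and end_in:
        return "inside"
    if end_in:
        return "into"
    if start_in:
        return "out of"
    # Starts and ends outside: did the stroke pass through the ring?
    if any(inside(p) for p in stroke):
        return "across"
    # Otherwise accumulate the angle swept around the centre, step by step
    swept = 0.0
    for (x0, y0), (x1, y1) in zip(stroke, stroke[1:]):
        a0 = math.atan2(y0 - cy, x0 - cx)
        a1 = math.atan2(y1 - cy, x1 - cx)
        swept += (a1 - a0 + math.pi) % (2 * math.pi) - math.pi  # wrap step to [-pi, pi)
    return "around" if abs(math.degrees(swept)) >= around_threshold_deg else "past"


circle = [(2 * math.cos(math.radians(a)), 2 * math.sin(math.radians(a))) for a in range(0, 360, 10)]
print(classify_stroke([(-3, 0), (0, 0), (3, 0)], (0, 0), 1.0))  # across
print(classify_stroke(circle, (0, 0), 1.0))                      # around
```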

59. weConnect

weConnect is an online service that enables mobile and desktop users to connect via exclusive and personal media channels. A person can easily create a media mix using images, text and animations, and broadcast the content to another person through a dedicated channel. The recipient can view the content live or retrospectively on a mobile or desktop. Connected with other services such as Amazon.com, weConnect enables users to compile a list of items they may like to purchase or recommend to others. As a research platform, weConnect has enabled us to explore the effects of rich media broadcast among individuals in close relationships and social circles.

60. TouchSense Haptic (touch feedback) systems

Haptics (touch feedback) is the future of the user experience in digital devices. Of the five senses, touch is the most proficient, the only one capable of simultaneous input and output. Touch feedback improves task performance, increases user satisfaction, and supplies a greater sense of realism and enjoyment. Immersion provides touch feedback systems for mobile devices and larger touchscreens.

62. LiveObject

LiveObject performs real-time object recognition: it can recognise objects from a range of categories at 20 frames per second.

It is a learning system: users can teach it new objects by placing them in front of the camera and telling LiveObject what each object is. The system can often learn from just a few examples.

Contact: John Winn

63. TellTable: Creative Storytelling on Surface

TellTable uses Microsoft Surface technology to provide an interactive storytelling experience, helping to stimulate creativity and self-expression in children. The storyteller(s) can manipulate various digital characters and scenery on Surface, which are created by capturing and editing real-world elements using a camera. By doing so, they can narrate, act and record the story in a lightweight way, similar to how children would tell stories using physical toys. TellTable enables children to create stories limited only by their imagination, and to share them with others later on. It also encourages children to discover the physical world and to collaborate with others while telling the story.

Contact: Xiang Cao

64. Bill Buxton's Interaction Classics

Historic videos of famous interactive systems from Bill Buxton's personal collection, including Sutherland's Sketchpad, Engelbart's NLS and others.

66. The PPA forest model

We now take it for granted that we use software simulations to build bridges, cars and planes. There is now an urgent need to develop accurate software simulations for various ecological systems, so that we can predict and mitigate the effects of climate change, and design better forms of agriculture. The PPA forest model allows accurate predictions of the annual-to-decade-to-century dynamics of forests, for example to forecast wood production, biodiversity and forest structure under alternative forest management scenarios.

68. HomeWatcher

Appliance-sized visualization of home network use.

Contact: Tim Regan & Christos Gkantsidis

69. Dragonfly

Dragonfly is a powerful platform for building small, connected and interactive devices. The platform provides support for three key areas in developing fully functional devices: electronic circuit assembly, software development and form-factor design. Devices can be quickly assembled from modular hardware units, programmed using the Microsoft .NET Micro Framework, and given shape using 3D printing technologies.

Contact: Nicolas Villar

70. SeeReal

SeeReal has developed scalable solutions for holographic 3D consumer display products. Prototypes built from off-the-shelf components demonstrate the key principles of SeeReal's solution with 20-inch, high-resolution, full-colour, interactive 3D holograms. Unlike stereo 3D, which inherently causes fatigue and eye strain for natural-depth 3D scenes (i.e. scenes with properly scaled depth), holographic 3D provides all the viewing information of a natural scene, including eye focus, and therefore unlimited depth. Anyone who can see 3D in real life can see 3D on a holographic 3D display without fatigue or other consumer risks.

72. SecondLight

SecondLight is a new surface-computing technology that can project images and detect gestures "in mid-air" above the display, in addition to supporting multitouch interactions on the surface. It works by using an electrically switchable liquid-crystal diffuser as the rear-projection display surface. This material is continually toggled between diffuse and clear states, so quickly that the switching is imperceptible. When it is diffuse, the system behaves like a regular surface computer, but when clear, it is possible to project into and image the area above the display surface. This enables magical new forms of interaction in which the UI is no longer bound to the display surface, but becomes part of the real world.

74. CDT Organic LED Displays

Demonstrating the highest-resolution display produced to date by CDT's Godmanchester development line. With Casio providing the active matrix backplane, CDT ink-jet printed its P-OLED materials to create a stunning 3" diagonal, full-colour, wide-QVGA (420x240) display.

ReadYourMeter.org

ReadYourMeter.org aims to help people to record and explain the energy consumption of buildings. This then allows them to determine whether changes they make in their behaviour reduce their energy consumption or increase it.
